Is this the expected quality after training for 800k iterations? #262

Hi, I have been training the SimSwap model on the VGGFace2-224 dataset for a few days, up to 800k iterations as suggested. Training crashed just before it reached 300k iterations, due to a problem I believe is unrelated to the code, so I used the flags to load the checkpoint and continue training. The output right before the crash was decent, with visible face swapping, but after resuming, by the time training reached 800k iterations the face swapping had become negligible.
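
One way to check the resume path is to confirm that the checkpoint holds everything a GAN needs to continue from where it stopped. The sketch below assumes the checkpoint is a single dict. The key names (`generator`, `discriminator`, `optimizer_G`, `optimizer_D`, `iteration`) are hypothetical and are not taken from SimSwap's code, which may store each network in a separate file. The idea is that if either network or its optimizer state is missing, training resumes from a different state than the one that was saved.

```python
# Hypothetical key names: SimSwap's actual checkpoint layout may differ
# (for example, one file per network).
REQUIRED_RESUME_KEYS = (
    "generator",       # generator weights
    "discriminator",   # discriminator weights
    "optimizer_G",     # generator optimizer state (e.g. Adam moment estimates)
    "optimizer_D",     # discriminator optimizer state
    "iteration",       # step counter, so schedules and logging continue correctly
)


def missing_resume_state(checkpoint: dict) -> list:
    """Return the required resume keys that are absent from `checkpoint`, in a fixed order."""
    return [key for key in REQUIRED_RESUME_KEYS if key not in checkpoint]


if __name__ == "__main__":
    # A checkpoint that stores only the network weights and the step counter.
    weights_only = {"generator": {}, "discriminator": {}, "iteration": 291_000}
    print(missing_resume_state(weights_only))  # ['optimizer_G', 'optimizer_D']
```

An empty list only means the pieces are present. It does not confirm that the loading code actually restores each of them into the right object.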

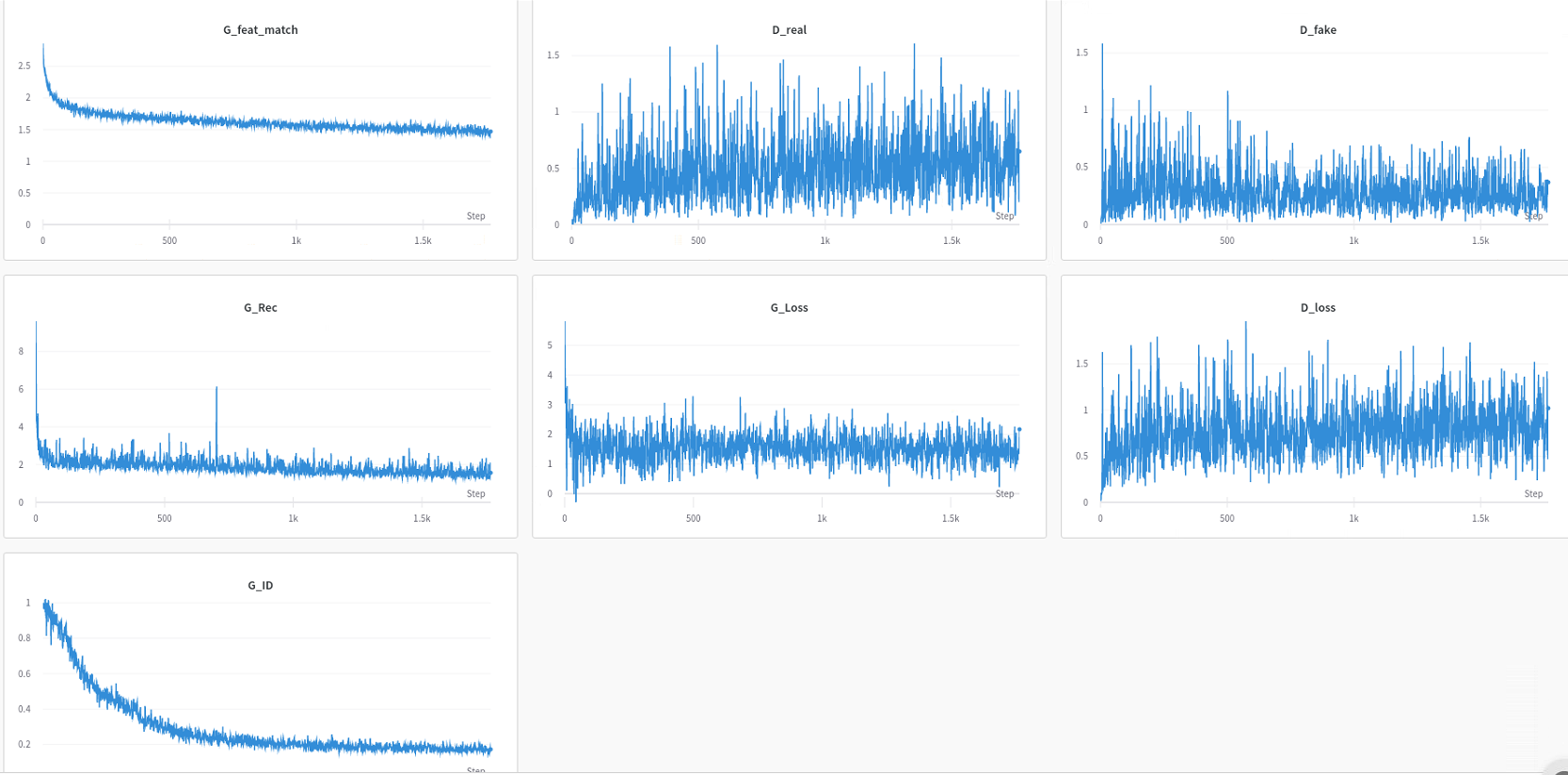

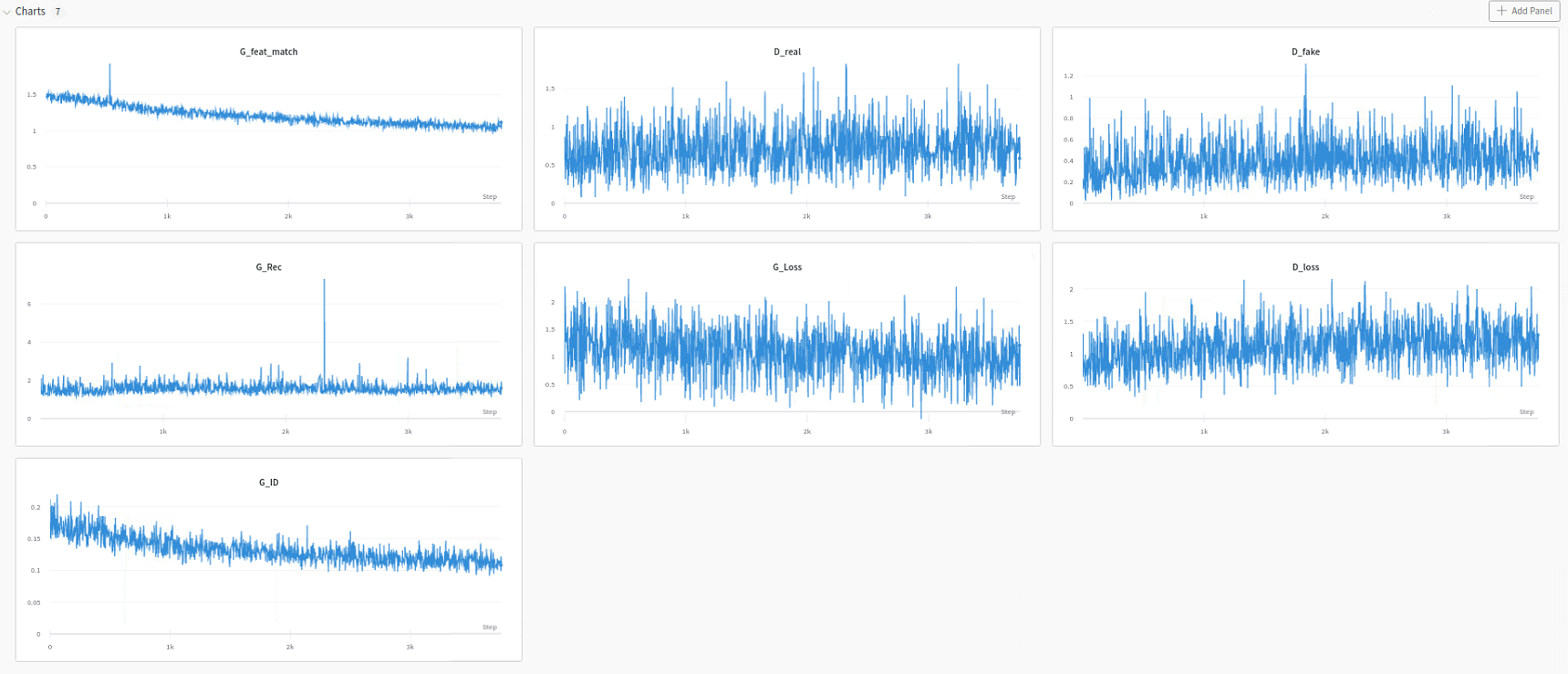

I monitor the losses in wandb. After training resumed, the losses picked up where they had left off and kept slowly decreasing as before, so I assumed the resume worked as intended.
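
The continuity check described above can be made explicit by comparing the mean loss over the last steps before the crash with the mean over the first steps after resuming. This is a minimal sketch that assumes the losses for one logged curve have been exported as two Python lists. The function name `resume_gap` and the `window` parameter are illustrative, and the numbers in the example are made up, not taken from this run.

```python
from statistics import mean


def resume_gap(losses_before, losses_after, window=100):
    """Relative difference between the mean of the last `window` losses before the
    crash and the mean of the first `window` losses after resuming."""
    if not losses_before or not losses_after:
        raise ValueError("both loss series must be non-empty")
    before = mean(losses_before[-window:])
    after = mean(losses_after[:window])
    # Guard against division by zero when the pre-crash mean is exactly 0.
    return abs(after - before) / max(abs(before), 1e-12)


if __name__ == "__main__":
    # Illustrative values only.
    before = [1.00, 0.98, 0.97, 0.96]
    after = [0.96, 0.95, 0.95, 0.94]
    print(round(resume_gap(before, after, window=4), 3))  # 0.028
```

A small gap is consistent with a successful resume but does not by itself prove that every component was restored. Running the check on each logged loss separately (for example, generator and discriminator losses) gives a more complete picture than a single combined curve.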

I use a batch size of 16 on a single RTX 3090.

TL;DR: Are these results normal after this many iterations, or is checkpoint saving/loading not working properly?

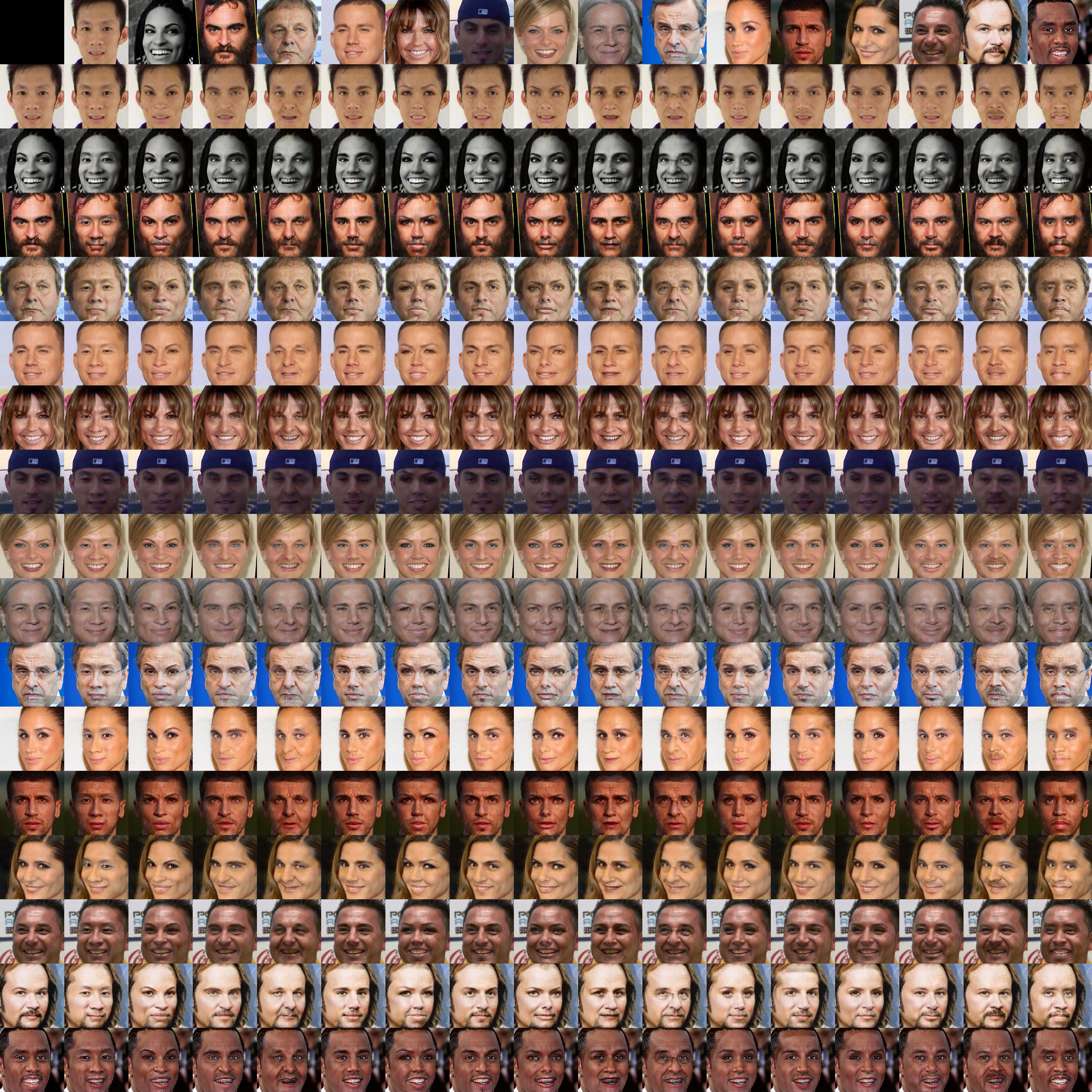

Output at iteration 291k:

Losses up to 291k iterations:

Output at iteration 800k:

Losses from 291k to 900k iterations:

Mind sharing the model?