Mirror of https://github.com/facefusion/facefusion-labs.git, synced 2026-05-22 23:59:40 +02:00

Final rename for everything


@@ -1,5 +1,5 @@
 [flake8]
 select = E22, E23, E24, E27, E3, E4, E7, F, I1, I2
 plugins = flake8-import-order
-application_import_names = embedding_converter, face_swapper
+application_import_names = crossface, hyperswap
 import-order-style = pycharm

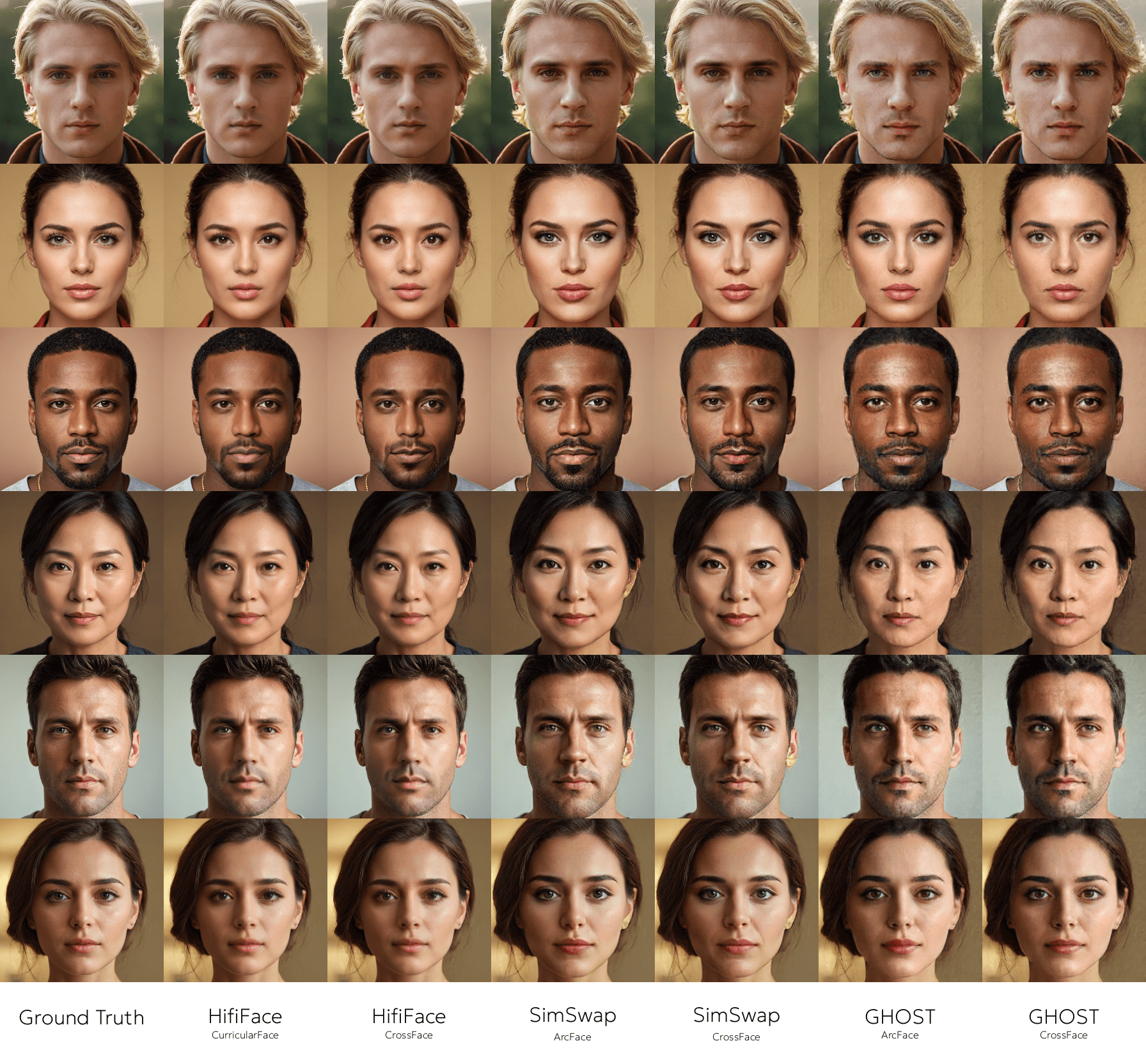

Binary image changed: 1.3 MiB before, 1.3 MiB after.

Binary image changed: 5.2 MiB before, 5.2 MiB after.

@@ -15,8 +15,8 @@ jobs:
   - run: pip install flake8
   - run: pip install flake8-import-order
   - run: pip install mypy
-  - run: flake8 embedding_converter face_swapper
-  - run: mypy embedding_converter face_swapper
+  - run: flake8 crossface hyperswap
+  - run: mypy crossface hyperswap
  test:
   runs-on: ubuntu-latest
   steps:

@@ -1,7 +1,7 @@
-Embedding Converter
-===================
+CrossFace
+=========

-> Convert face embeddings between various models.
+> Seamless transform face embeddings across embedder models.

@@ -9,7 +9,7 @@ Embedding Converter
 Preview
 -------





 Installation

@@ -23,7 +23,7 @@ pip install -r requirements.txt
 Setup
 -----

-This `config.ini` utilizes the MegaFace dataset to train the Embedding Converter for SimSwap.
+This `config.ini` utilizes the MegaFace dataset to train the CrossFace model for SimSwap.

 ```
 [training.dataset]

@@ -50,13 +50,13 @@ max_epochs = 4096
 strategy = auto
 precision = 16-mixed
 logger_path = .logs
-logger_name = arcface_converter_simswap
+logger_name = crossface_simswap
 ```

 ```
 [training.output]
 directory_path = .outputs
-file_pattern = arcface_converter_simswap_{epoch}_{step}
+file_pattern = crossface_simswap_{epoch}_{step}
 resume_path = .outputs/last.ckpt
 ```

@@ -64,7 +64,7 @@ resume_path = .outputs/last.ckpt
 [exporting]
 directory_path = .exports
 source_path = .outputs/last.ckpt
-target_path = .exports/arcface_converter_simswap.onnx
+target_path = .exports/crossface_simswap.onnx
 ir_version = 10
 opset_version = 15
 ```

@@ -73,7 +73,7 @@ opset_version = 15
 Training
 --------

-Train the Embedding Converter model.
+Train the model.

 ```
 python train.py

@@ -3,7 +3,7 @@ from configparser import ConfigParser

 import torch

-from .training import EmbeddingConverterTrainer
+from .training import CrossFaceTrainer

 CONFIG_PARSER = ConfigParser()
 CONFIG_PARSER.read('config.ini')

@@ -17,7 +17,7 @@ def export() -> None:
 	config_opset_version = CONFIG_PARSER.getint('exporting', 'opset_version')

 	os.makedirs(config_directory_path, exist_ok = True)
-	model = EmbeddingConverterTrainer.load_from_checkpoint(config_source_path, map_location = 'cpu').eval()
+	model = CrossFaceTrainer.load_from_checkpoint(config_source_path, map_location ='cpu').eval()
 	model.ir_version = torch.tensor(config_ir_version)
 	input_tensor = torch.randn(1, 512)
 	torch.onnx.export(model, input_tensor, config_target_path, input_names = [ 'input' ], output_names = [ 'output' ], opset_version = config_opset_version)
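
The `[exporting]` keys consumed by `export()` can be read back with the standard-library `ConfigParser`. The following is a minimal sketch, not the repository's code: `read_export_config` and `CONFIG_TEXT` are hypothetical names, the values are copied from the renamed `[exporting]` block in the README hunk, and reading `ir_version` with `getint` is an assumption (only the `opset_version` read is visible in this diff).

```python
from configparser import ConfigParser
from typing import Dict, Union

# Values copied from the renamed [exporting] block shown in the README diff.
CONFIG_TEXT = """
[exporting]
directory_path = .exports
source_path = .outputs/last.ckpt
target_path = .exports/crossface_simswap.onnx
ir_version = 10
opset_version = 15
"""


def read_export_config(config_text: str) -> Dict[str, Union[str, int]]:
    # Hypothetical helper: parse the text and return the typed [exporting] values.
    config_parser = ConfigParser()
    config_parser.read_string(config_text)
    return {
        'directory_path': config_parser.get('exporting', 'directory_path'),
        'source_path': config_parser.get('exporting', 'source_path'),
        'target_path': config_parser.get('exporting', 'target_path'),
        # Assumption: ir_version is stored as an integer, like opset_version.
        'ir_version': config_parser.getint('exporting', 'ir_version'),
        'opset_version': config_parser.getint('exporting', 'opset_version'),
    }


export_config = read_export_config(CONFIG_TEXT)
print(export_config['target_path'], export_config['opset_version'])
```

Parsing from a string keeps the sketch independent of any `config.ini` on disk; the repository itself calls `CONFIG_PARSER.read('config.ini')`.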


@@ -2,7 +2,7 @@ import torch
 from torch import Tensor, nn


-class EmbeddingConverter(nn.Module):
+class CrossFace(nn.Module):
 	def __init__(self) -> None:
 		super().__init__()
 		self.layers = self.create_layers()

@@ -11,26 +11,26 @@ from torch.utils.data import Dataset, random_split
 from torchdata.stateful_dataloader import StatefulDataLoader

 from .dataset import StaticDataset
-from .models.embedding_converter import EmbeddingConverter
+from .models.crossface import CrossFace
 from .types import Batch, Embedding, OptimizerSet

 CONFIG_PARSER = ConfigParser()
 CONFIG_PARSER.read('config.ini')


-class EmbeddingConverterTrainer(LightningModule):
+class CrossFaceTrainer(LightningModule):
 	def __init__(self, config_parser : ConfigParser) -> None:
 		super().__init__()
 		self.config_source_path = config_parser.get('training.model', 'source_path')
 		self.config_target_path = config_parser.get('training.model', 'target_path')
 		self.config_learning_rate = config_parser.getfloat('training.trainer', 'learning_rate')
-		self.embedding_converter = EmbeddingConverter()
+		self.crossface = CrossFace()
 		self.source_embedder = torch.jit.load(self.config_source_path, map_location = 'cpu').eval()
 		self.target_embedder = torch.jit.load(self.config_target_path, map_location = 'cpu').eval()
 		self.mse_loss = nn.MSELoss()

 	def forward(self, source_embedding : Embedding) -> Embedding:
-		return self.embedding_converter(source_embedding)
+		return self.crossface(source_embedding)

 	def training_step(self, batch : Batch, batch_index : int) -> Tensor:
 		with torch.no_grad():

@@ -125,10 +125,10 @@ def train() -> None:

 	dataset = StaticDataset(CONFIG_PARSER)
 	training_loader, validation_loader = create_loaders(dataset)
-	embedding_converter_trainer = EmbeddingConverterTrainer(CONFIG_PARSER)
+	crossface_trainer = CrossFaceTrainer(CONFIG_PARSER)
 	trainer = create_trainer()

 	if os.path.exists(config_resume_path):
-		trainer.fit(embedding_converter_trainer, training_loader, validation_loader, ckpt_path = config_resume_path)
+		trainer.fit(crossface_trainer, training_loader, validation_loader, ckpt_path = config_resume_path)
 	else:
-		trainer.fit(embedding_converter_trainer, training_loader, validation_loader)
+		trainer.fit(crossface_trainer, training_loader, validation_loader)
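
The resume branch in `train()` passes `ckpt_path` only when the checkpoint is present. A minimal sketch of that decision as a standalone function follows; `resolve_resume_path` is a hypothetical name, not part of the repository. It uses `os.path.isfile`, as the HyperSwap `train()` in this commit does, rather than the `os.path.exists` seen in the CrossFace hunk, so that a directory at the same path is not mistaken for a checkpoint.

```python
import os
import tempfile
from typing import Optional


def resolve_resume_path(resume_path: str) -> Optional[str]:
    # Hypothetical helper: return the path only if it is an existing file.
    if os.path.isfile(resume_path):
        return resume_path
    return None


with tempfile.TemporaryDirectory() as directory_path:
    checkpoint_path = os.path.join(directory_path, 'last.ckpt')
    # No file has been written yet, so nothing to resume from.
    missing_result = resolve_resume_path(checkpoint_path)
    with open(checkpoint_path, 'wb') as checkpoint_file:
        checkpoint_file.write(b'')
    # The file now exists, so its path is returned.
    existing_result = resolve_resume_path(checkpoint_path)
    # A directory is rejected even though it exists.
    directory_result = resolve_resume_path(directory_path)

print(missing_result is None, existing_result is not None, directory_result is None)
```

Since `ckpt_path` defaults to `None` in Lightning's `Trainer.fit`, the two `trainer.fit` calls could in principle collapse into one that passes `ckpt_path = resolve_resume_path(config_resume_path)`; whether that is preferable to the explicit branch is a style choice.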


@@ -1,7 +1,7 @@
-Face Swapper
-============
+HyperSwap
+=========

-> Face shape and occlusion aware identity transfer.
+> Hyper accurate face swapping for everyone.

@@ -23,12 +23,12 @@ pip install -r requirements.txt
 Setup
 -----

-This `config.ini` utilizes the VGGFace2 dataset to train the Face Swapper model.
+This `config.ini` utilizes the VGGFace2 dataset to train the HyperSwap model.

 ```
 [training.dataset]
 file_pattern = .datasets/vggface2/**/*.jpg
-warp_template = vgg_face_hq_to_arcface_128_v2
+warp_template = vggfacehq_256_to_arcface_128_v2
 transform_size = 256
 batch_mode = equal
 batch_ratio = 0.2

@@ -92,14 +92,14 @@ max_epochs = 50
 strategy = auto
 precision = 16-mixed
 logger_path = .logs
-logger_name = face_swapper
+logger_name = hyperswap
 preview_frequency = 100
 ```

 ```
 [training.output]
 directory_path = .outputs
-file_pattern = face_swapper_{epoch}_{step}
+file_pattern = hyperswap_{epoch}_{step}
 resume_path = .outputs/last.ckpt
 ```
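
A `config.ini` written before this commit still carries the old names. The sketch below shows one way such a file might be updated; it is an illustration under stated assumptions, not a tool shipped with the repository. `RENAMED_VALUES`, `OLD_CONFIG_TEXT` and `migrate_config` are hypothetical names, the mapping lists only HyperSwap renames visible in this diff, and the `[training.trainer]` section name for `logger_name` is assumed because the hunk does not show its section header.

```python
from configparser import ConfigParser

# Old-to-new values taken from the HyperSwap hunks of this commit.
RENAMED_VALUES = {
    'ffhq_to_arcface_128_v2': 'ffhq_512_to_arcface_128_v2',
    'vgg_face_hq_to_arcface_128_v2': 'vggfacehq_256_to_arcface_128_v2',
    'face_swapper': 'hyperswap',
    'face_swapper_{epoch}_{step}': 'hyperswap_{epoch}_{step}',
}

# Assumption: logger_name lives in [training.trainer]; the diff does not show it.
OLD_CONFIG_TEXT = """
[training.dataset]
file_pattern = .datasets/vggface2/**/*.jpg
warp_template = vgg_face_hq_to_arcface_128_v2

[training.trainer]
logger_name = face_swapper

[training.output]
file_pattern = face_swapper_{epoch}_{step}
"""


def migrate_config(config_parser: ConfigParser) -> int:
    # Hypothetical helper: rewrite exact-match old values, return how many changed.
    changed_total = 0
    for section in config_parser.sections():
        for option, value in config_parser.items(section, raw=True):
            if value in RENAMED_VALUES:
                config_parser.set(section, option, RENAMED_VALUES[value])
                changed_total += 1
    return changed_total


# interpolation=None keeps characters such as '%' in values literal.
config_parser = ConfigParser(interpolation=None)
config_parser.read_string(OLD_CONFIG_TEXT)
changed_total = migrate_config(config_parser)
print(changed_total, config_parser.get('training.dataset', 'warp_template'))
```

Only whole values are matched, so unrelated settings such as the dataset `file_pattern` are left untouched.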


@@ -127,7 +127,7 @@ output_path = .outputs/output.jpg
 Training
 --------

-Train the Face Swapper model.
+Train the model.

 ```
 python train.py

@@ -5,7 +5,7 @@ from typing import Tuple
 import torch
 from torch import Tensor, nn

-from .training import FaceSwapperTrainer
+from .training import HyperSwapTrainer
 from .types import Embedding, Mask, Module

 CONFIG_PARSER = ConfigParser()

@@ -36,7 +36,7 @@ def export() -> None:
 	config_precision = CONFIG_PARSER.get('exporting', 'precision')

 	os.makedirs(config_directory_path, exist_ok = True)
-	model = FaceSwapperTrainer.load_from_checkpoint(config_source_path, config_parser = CONFIG_PARSER, map_location = 'cpu').eval()
+	model = HyperSwapTrainer.load_from_checkpoint(config_source_path, config_parser = CONFIG_PARSER, map_location ='cpu').eval()

 	if config_precision == 'half':
 		model = HalfPrecision(model).eval()

@@ -10,12 +10,12 @@ WARP_TEMPLATE_SET : WarpTemplateSet =\
 		[ 8.75000016e-01, -1.07193451e-08, 3.80446920e-10 ],
 		[ 1.07193451e-08, 8.75000016e-01, -1.25000007e-01 ]
 	]),
-	'ffhq_to_arcface_128_v2': torch.tensor(
+	'ffhq_512_to_arcface_128_v2': torch.tensor(
 	[
 		[ 8.50048894e-01, -1.29486822e-04, 1.90956388e-03 ],
 		[ 1.29486822e-04, 8.50048894e-01, 9.56254653e-02 ]
 	]),
-	'vgg_face_hq_to_arcface_128_v2': torch.tensor(
+	'vggfacehq_256_to_arcface_128_v2': torch.tensor(
 	[
 		[ 1.01305414, -0.00140513, -0.00585911 ],
 		[ 0.00140513, 1.01305414, 0.11169602 ]

@@ -4,7 +4,7 @@ import torch
 from torchvision import io

 from .helper import calc_embedding
-from .training import FaceSwapperTrainer
+from .training import HyperSwapTrainer

 CONFIG_PARSER = configparser.ConfigParser()
 CONFIG_PARSER.read('config.ini')

@@ -17,7 +17,7 @@ def infer() -> None:
 	config_target_path = CONFIG_PARSER.get('inferencing', 'target_path')
 	config_output_path = CONFIG_PARSER.get('inferencing', 'output_path')

-	generator = FaceSwapperTrainer.load_from_checkpoint(config_generator_path, config_parser = CONFIG_PARSER, map_location = 'cpu').eval()
+	generator = HyperSwapTrainer.load_from_checkpoint(config_generator_path, config_parser = CONFIG_PARSER, map_location ='cpu').eval()
 	embedder = torch.jit.load(config_embedder_path, map_location = 'cpu').eval()

 	source_tensor = io.read_image(config_source_path)

@@ -25,7 +25,7 @@ CONFIG_PARSER = ConfigParser()
 CONFIG_PARSER.read('config.ini')


-class FaceSwapperTrainer(LightningModule):
+class HyperSwapTrainer(LightningModule):
 	def __init__(self, config_parser : ConfigParser) -> None:
 		super().__init__()
 		self.config_generator_embedder_path = config_parser.get('training.model', 'generator_embedder_path')

@@ -239,10 +239,10 @@ def train() -> None:

 	dataset = ConcatDataset(prepare_datasets(CONFIG_PARSER))
 	training_loader, validation_loader = create_loaders(dataset)
-	face_swapper_trainer = FaceSwapperTrainer(CONFIG_PARSER)
+	hyperswap_trainer = HyperSwapTrainer(CONFIG_PARSER)
 	trainer = create_trainer()

 	if os.path.isfile(config_resume_path):
-		trainer.fit(face_swapper_trainer, training_loader, validation_loader, ckpt_path = config_resume_path)
+		trainer.fit(hyperswap_trainer, training_loader, validation_loader, ckpt_path = config_resume_path)
 	else:
-		trainer.fit(face_swapper_trainer, training_loader, validation_loader)
+		trainer.fit(hyperswap_trainer, training_loader, validation_loader)

@@ -20,5 +20,5 @@ FaceMaskerModule : TypeAlias = Module

 OptimizerSet : TypeAlias = Any

-WarpTemplate = Literal['arcface_128_v2_to_arcface_112_v2', 'ffhq_to_arcface_128_v2', 'vgg_face_hq_to_arcface_128_v2']
+WarpTemplate = Literal['arcface_128_v2_to_arcface_112_v2', 'ffhq_512_to_arcface_128_v2', 'vggfacehq_256_to_arcface_128_v2']
 WarpTemplateSet : TypeAlias = Dict[WarpTemplate, Tensor]

@@ -3,9 +3,9 @@ from configparser import ConfigParser
 import pytest
 import torch

-from face_swapper.src.networks.aad import AAD
-from face_swapper.src.networks.masknet import MaskNet
-from face_swapper.src.networks.unet import UNet
+from hyperswap.src.networks.aad import AAD
+from hyperswap.src.networks.masknet import MaskNet
+from hyperswap.src.networks.unet import UNet


 @pytest.mark.parametrize('output_size', [ 128, 256, 512 ])
