mirror of

https://github.com/facefusion/facefusion-labs.git

synced 2026-05-22 23:59:40 +02:00

2e6394565a

* add sync batchnorm * replace random.choice with hash * fifty percent reduction * fix discriminator input * restore dataset.py * Remove duplicates * add discriminator_ratio to config * fix onnx export bug: replace round() with int() * Fix embedding naming * Introduce ModelWithConfigCheckpoint callback (#86) * Fix dist ini * Style: Refactor typing and improve code clarity in training.py (#88) * Add type casting for trainer params * Add type casting for trainer params * Add type casting for trainer params * Remove inplace activations for torch.compile compatibility (#89) * Fix README * improvise with norm layers & weighted average * add skip layer * use gelu instead of leaky_relu * cleanup * cleanup * Update dependencies * Different defaults and enable validation * Different defaults and enable validation * Revert to higher batch size * Just use copy over copy2 --------- Co-authored-by: harisreedhar <h4harisreedhar.s.s@gmail.com> Co-authored-by: NeuroDonu <112660822+NeuroDonu@users.noreply.github.com> Co-authored-by: Harisreedhar <46858047+harisreedhar@users.noreply.github.com>

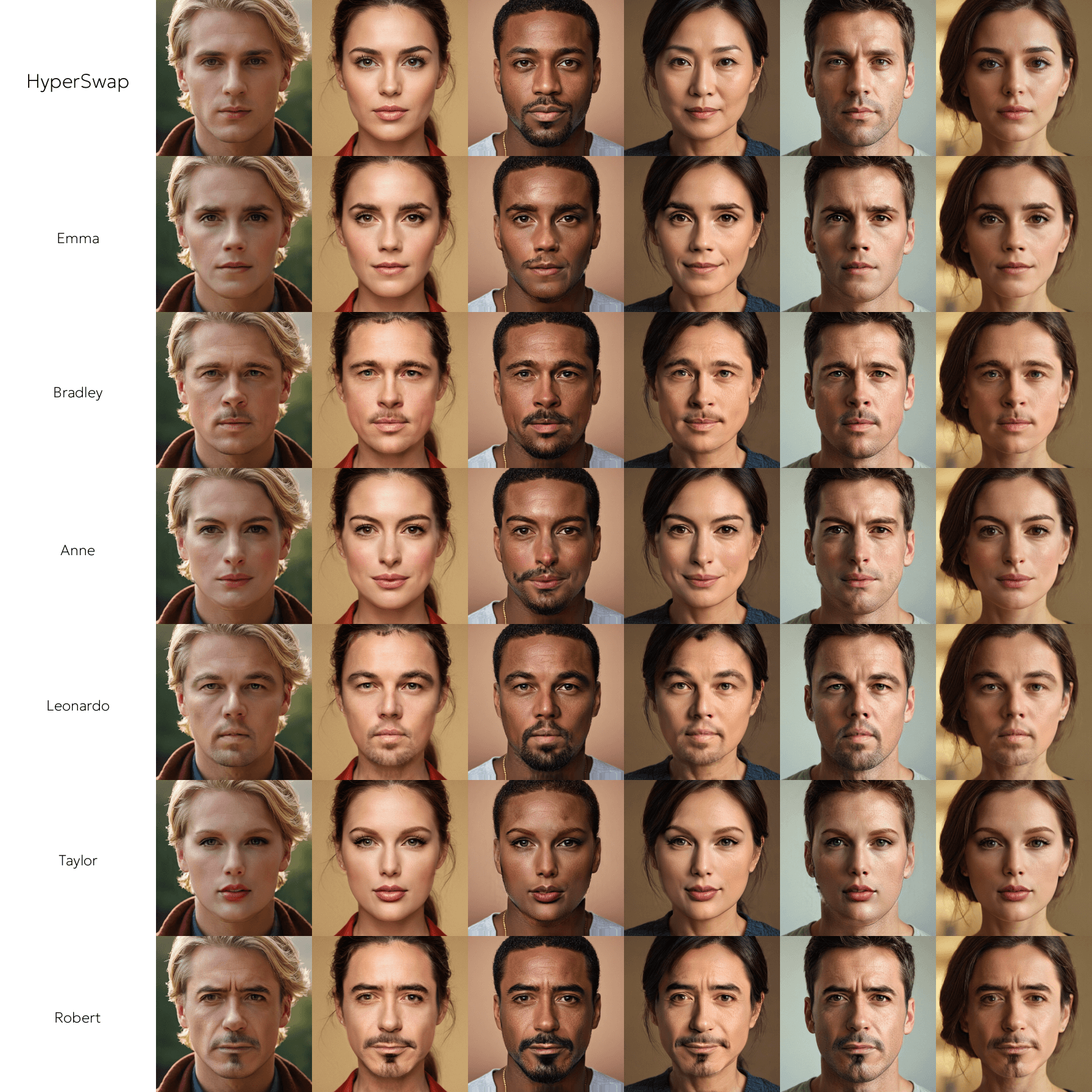

HyperSwap

Hyper accurate face swapping for everyone.

Preview

Installation

pip install -r requirements.txt

Setup

This config.ini utilizes the VGGFace2 dataset to train the HyperSwap model.

[training.dataset]

file_pattern = .datasets/vggface2/**/*.jpg

convert_template = vggfacehq_512_to_arcface_128

multiplier = 1

transform_size = 256

usage_mode = both

batch_mode = same

batch_ratio = 0.2

[training.loader]

batch_size = 8

num_workers = 8

split_ratio = 0.9995

[training.model]

generator_embedder_path = .models/blendface.pt

loss_embedder_path = .models/arcface.pt

gazer_path = .models/gazer.pt

face_masker_path = .models/face_masker.pt

[training.model.generator]

source_channels = 512

output_size = 256

num_blocks = 2

[training.model.discriminator]

input_channels = 3

num_filters = 64

num_layers = 5

num_discriminators = 3

kernel_size = 4

[training.model.masker]

input_channels = 67

output_channels = 1

num_filters = 16

[training.losses]

adversarial_weight = 1.0

cycle_weight = 1.0

feature_weight = 10.0

reconstruction_weight = 10.0

identity_weight = 20.0

gaze_weight = 0.05

mask_weight = 5.0

[training.trainer]

accumulate_size = 4

discriminator_ratio = 0.4

gradient_clip = 20.0

max_epochs = 50

strategy = auto

precision = 16-mixed

sync_batchnorm = false

preview_frequency = 100

[training.modifier]

mask_factor = 0.01

noise_factor = 0.05

[training.optimizer.generator]

learning_rate = 0.0004

momentum = 0.5

scheduler_factor = 0.7

scheduler_patience = 2000

[training.optimizer.discriminator]

learning_rate = 0.0002

momentum = 0.5

scheduler_factor = 0.7

scheduler_patience = 2000

[training.logger]

logger_path = .logs

logger_name = hyperswap

[training.output]

directory_path = .outputs

file_pattern = hyperswap_{epoch}_{step}

resume_path = .outputs/last.ckpt

[exporting]

directory_path = .exports

source_path = .outputs/last.ckpt

target_path = .exports/hyperswap_256.onnx

target_size = 256

ir_version = 10

opset_version = 15

precision = full

[inferencing]

generator_path = .outputs/last.ckpt

embedder_path = .models/arcface.pt

source_path = .assets/source.jpg

target_path = .assets/target.jpg

output_path = .outputs/output.jpg

Training

Train the model.

python train.py

Launch the TensorBoard to monitor the training.

tensorboard --logdir .logs

Exporting

Export the model to ONNX.

python export.py

Inferencing

Inference the model.

python infer.py