mirror of

https://github.com/facefusion/facefusion.git

synced 2026-04-29 13:05:59 +02:00

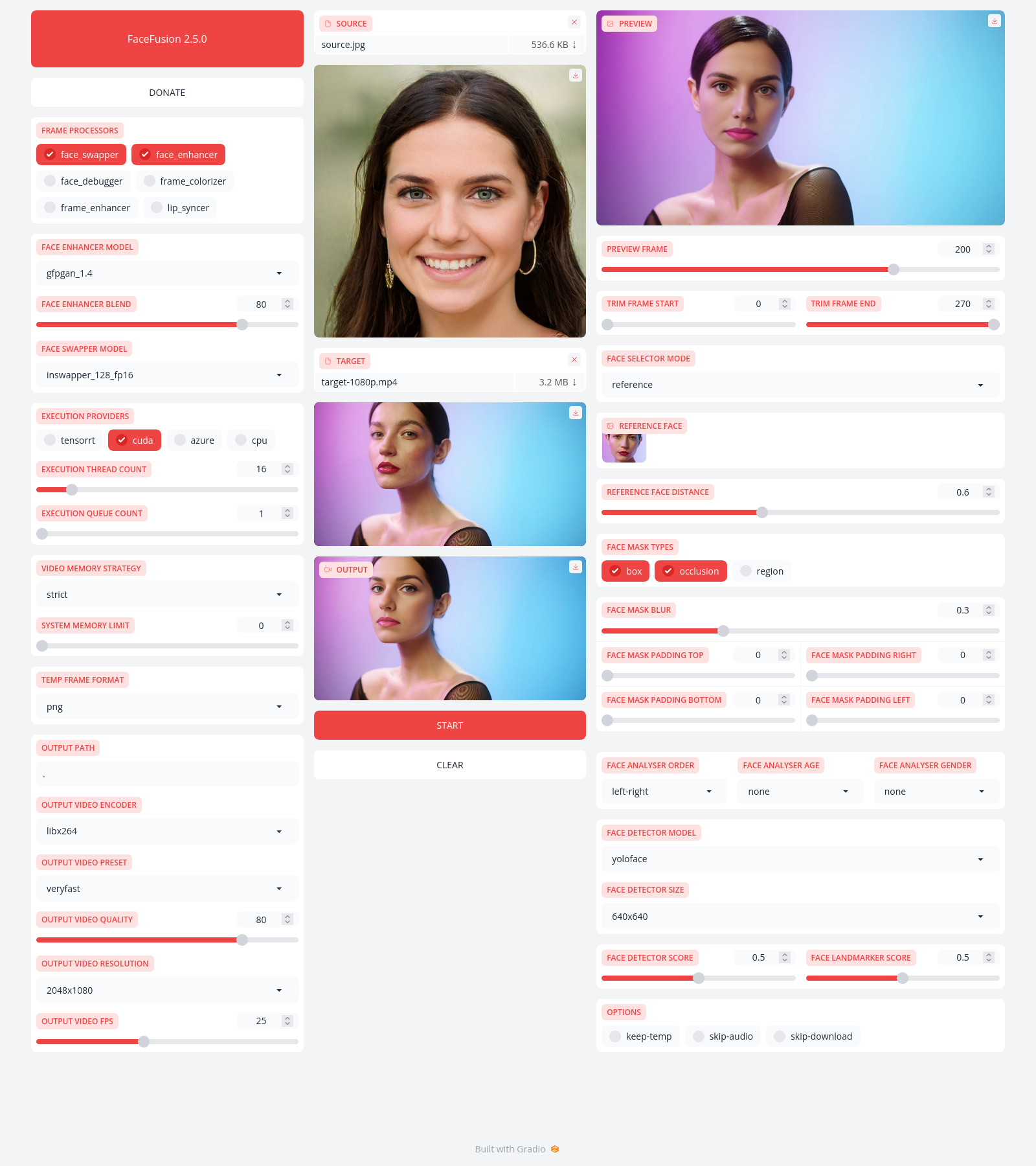

Feels so good to get rid of Gradio (#978)

This commit is contained in:

Binary file not shown.

|

Before Width: | Height: | Size: 1.3 MiB |

@@ -8,12 +8,6 @@ FaceFusion

|

||||

|

||||

|

||||

|

||||

Preview

|

||||

-------

|

||||

|

||||

|

||||

|

||||

|

||||

Installation

|

||||

------------

|

||||

|

||||

@@ -34,7 +28,6 @@ options:

|

||||

|

||||

commands:

|

||||

run run the program

|

||||

headless-run run the program in headless mode

|

||||

batch-run run the program in batch mode

|

||||

force-download force automate downloads and exit

|

||||

benchmark benchmark the program

|

||||

|

||||

@@ -104,11 +104,6 @@ frame_enhancer_blend =

|

||||

lip_syncer_model =

|

||||

lip_syncer_weight =

|

||||

|

||||

[uis]

|

||||

open_browser =

|

||||

ui_layouts =

|

||||

ui_workflow =

|

||||

|

||||

[download]

|

||||

download_providers =

|

||||

download_scope =

|

||||

|

||||

@@ -10,7 +10,7 @@ def detect_app_context() -> AppContext:

|

||||

while frame:

|

||||

if os.path.join('facefusion', 'jobs') in frame.f_code.co_filename:

|

||||

return 'cli'

|

||||

if os.path.join('facefusion', 'uis') in frame.f_code.co_filename:

|

||||

return 'ui'

|

||||

if os.path.join('facefusion', 'apis') in frame.f_code.co_filename:

|

||||

return 'api'

|

||||

frame = frame.f_back

|

||||

return 'cli'

|

||||

|

||||

+1

-4

@@ -64,10 +64,7 @@ def apply_args(args : Args, apply_state_item : ApplyStateItem) -> None:

|

||||

|

||||

for processor_module in get_processors_modules(available_processors):

|

||||

processor_module.apply_args(args, apply_state_item)

|

||||

|

||||

apply_state_item('open_browser', args.get('open_browser'))

|

||||

apply_state_item('ui_layouts', args.get('ui_layouts'))

|

||||

apply_state_item('ui_workflow', args.get('ui_workflow'))

|

||||

# execution

|

||||

apply_state_item('execution_device_ids', args.get('execution_device_ids'))

|

||||

apply_state_item('execution_providers', args.get('execution_providers'))

|

||||

apply_state_item('execution_thread_count', args.get('execution_thread_count'))

|

||||

|

||||

@@ -148,7 +148,6 @@ log_level_set : LogLevelSet =\

|

||||

}

|

||||

log_levels : List[LogLevel] = list(get_args(LogLevel))

|

||||

|

||||

ui_workflows : List[UiWorkflow] = list(get_args(UiWorkflow))

|

||||

job_statuses : List[JobStatus] = list(get_args(JobStatus))

|

||||

|

||||

benchmark_cycle_count_range : Sequence[int] = create_int_range(1, 10, 1)

|

||||

|

||||

@@ -62,17 +62,6 @@ def route(args : Args) -> None:

|

||||

hard_exit(error_code)

|

||||

|

||||

if state_manager.get_item('command') == 'run':

|

||||

import facefusion.uis.core as ui

|

||||

|

||||

if not common_pre_check() or not processors_pre_check():

|

||||

hard_exit(2)

|

||||

for ui_layout in ui.get_ui_layouts_modules(state_manager.get_item('ui_layouts')):

|

||||

if not ui_layout.pre_check():

|

||||

hard_exit(2)

|

||||

ui.init()

|

||||

ui.launch()

|

||||

|

||||

if state_manager.get_item('command') == 'headless-run':

|

||||

if not job_manager.init_jobs(state_manager.get_item('jobs_path')):

|

||||

hard_exit(1)

|

||||

error_code = process_headless(args)

|

||||

|

||||

@@ -17,7 +17,7 @@ from facefusion.types import DownloadSet, ExecutionProvider, InferencePool, Infe

|

||||

INFERENCE_POOL_SET : InferencePoolSet =\

|

||||

{

|

||||

'cli': {},

|

||||

'ui': {}

|

||||

'api': {}

|

||||

}

|

||||

|

||||

|

||||

@@ -31,10 +31,10 @@ def get_inference_pool(module_name : str, model_names : List[str], model_source_

|

||||

for execution_device_id in execution_device_ids:

|

||||

inference_context = get_inference_context(module_name, model_names, execution_device_id, execution_providers)

|

||||

|

||||

if app_context == 'cli' and INFERENCE_POOL_SET.get('ui').get(inference_context):

|

||||

INFERENCE_POOL_SET['cli'][inference_context] = INFERENCE_POOL_SET.get('ui').get(inference_context)

|

||||

if app_context == 'ui' and INFERENCE_POOL_SET.get('cli').get(inference_context):

|

||||

INFERENCE_POOL_SET['ui'][inference_context] = INFERENCE_POOL_SET.get('cli').get(inference_context)

|

||||

if app_context == 'cli' and INFERENCE_POOL_SET.get('api').get(inference_context):

|

||||

INFERENCE_POOL_SET['cli'][inference_context] = INFERENCE_POOL_SET.get('api').get(inference_context)

|

||||

if app_context == 'api' and INFERENCE_POOL_SET.get('cli').get(inference_context):

|

||||

INFERENCE_POOL_SET['api'][inference_context] = INFERENCE_POOL_SET.get('cli').get(inference_context)

|

||||

if not INFERENCE_POOL_SET.get(app_context).get(inference_context):

|

||||

INFERENCE_POOL_SET[app_context][inference_context] = create_inference_pool(model_source_set, execution_device_id, execution_providers)

|

||||

|

||||

|

||||

@@ -149,9 +149,6 @@ LOCALES : Locales =\

|

||||

'processors': 'load a single or multiple processors (choices: {choices}, ...)',

|

||||

'background-remover-model': 'choose the model responsible for removing the background',

|

||||

'background-remover-color': 'apply red, green blue and alpha values of the background',

|

||||

'open_browser': 'open the browser once the program is ready',

|

||||

'ui_layouts': 'launch a single or multiple UI layouts (choices: {choices}, ...)',

|

||||

'ui_workflow': 'choose the ui workflow',

|

||||

'download_providers': 'download using different providers (choices: {choices}, ...)',

|

||||

'download_scope': 'specify the download scope',

|

||||

'benchmark_mode': 'choose the benchmark mode',

|

||||

@@ -165,7 +162,6 @@ LOCALES : Locales =\

|

||||

'log_level': 'adjust the message severity displayed in the terminal',

|

||||

'halt_on_error': 'halt the program once an error occurred',

|

||||

'run': 'run the program',

|

||||

'headless_run': 'run the program in headless mode',

|

||||

'batch_run': 'run the program in batch mode',

|

||||

'force_download': 'force automate downloads and exit',

|

||||

'benchmark': 'benchmark the program',

|

||||

@@ -262,7 +258,6 @@ LOCALES : Locales =\

|

||||

'temp_frame_format_dropdown': 'TEMP FRAME FORMAT',

|

||||

'terminal_textbox': 'TERMINAL',

|

||||

'trim_frame_slider': 'TRIM FRAME',

|

||||

'ui_workflow': 'UI WORKFLOW',

|

||||

'video_memory_strategy_dropdown': 'VIDEO MEMORY STRATEGY',

|

||||

'webcam_fps_slider': 'WEBCAM FPS',

|

||||

'webcam_image': 'WEBCAM',

|

||||

|

||||

+1

-12

@@ -195,16 +195,6 @@ def create_processors_program() -> ArgumentParser:

|

||||

return program

|

||||

|

||||

|

||||

def create_uis_program() -> ArgumentParser:

|

||||

program = ArgumentParser(add_help = False)

|

||||

available_ui_layouts = [ get_file_name(file_path) for file_path in resolve_file_paths('facefusion/uis/layouts') ]

|

||||

group_uis = program.add_argument_group('uis')

|

||||

group_uis.add_argument('--open-browser', help = translator.get('help.open_browser'), action = 'store_true', default = config.get_bool_value('uis', 'open_browser'))

|

||||

group_uis.add_argument('--ui-layouts', help = translator.get('help.ui_layouts').format(choices = ', '.join(available_ui_layouts)), default = config.get_str_list('uis', 'ui_layouts', 'default'), choices = available_ui_layouts, nargs = '+', metavar = 'UI_LAYOUTS')

|

||||

group_uis.add_argument('--ui-workflow', help = translator.get('help.ui_workflow'), default = config.get_str_value('uis', 'ui_workflow', 'instant_runner'), choices = facefusion.choices.ui_workflows)

|

||||

return program

|

||||

|

||||

|

||||

def create_download_providers_program() -> ArgumentParser:

|

||||

program = ArgumentParser(add_help = False)

|

||||

group_download = program.add_argument_group('download')

|

||||

@@ -297,8 +287,7 @@ def create_program() -> ArgumentParser:

|

||||

program._positionals.title = 'commands'

|

||||

program.add_argument('-v', '--version', version = metadata.get('name') + ' ' + metadata.get('version'), action = 'version')

|

||||

sub_program = program.add_subparsers(dest = 'command')

|

||||

sub_program.add_parser('run', help = translator.get('help.run'), parents = [ create_config_path_program(), create_temp_path_program(), create_jobs_path_program(), create_source_paths_program(), create_target_path_program(), create_output_path_program(), collect_step_program(), create_uis_program(), create_benchmark_program(), collect_job_program() ], formatter_class = create_help_formatter_large)

|

||||

sub_program.add_parser('headless-run', help = translator.get('help.headless_run'), parents = [ create_config_path_program(), create_temp_path_program(), create_jobs_path_program(), create_source_paths_program(), create_target_path_program(), create_output_path_program(), collect_step_program(), collect_job_program() ], formatter_class = create_help_formatter_large)

|

||||

sub_program.add_parser('run', help = translator.get('help.run'), parents = [ create_config_path_program(), create_temp_path_program(), create_jobs_path_program(), create_source_paths_program(), create_target_path_program(), create_output_path_program(), collect_step_program(), collect_job_program() ], formatter_class = create_help_formatter_large)

|

||||

sub_program.add_parser('batch-run', help = translator.get('help.batch_run'), parents = [ create_config_path_program(), create_temp_path_program(), create_jobs_path_program(), create_source_pattern_program(), create_target_pattern_program(), create_output_pattern_program(), collect_step_program(), collect_job_program() ], formatter_class = create_help_formatter_large)

|

||||

sub_program.add_parser('force-download', help = translator.get('help.force_download'), parents = [ create_download_providers_program(), create_download_scope_program(), create_log_level_program() ], formatter_class = create_help_formatter_large)

|

||||

sub_program.add_parser('benchmark', help = translator.get('help.benchmark'), parents = [ create_temp_path_program(), collect_step_program(), create_benchmark_program(), collect_job_program() ], formatter_class = create_help_formatter_large)

|

||||

|

||||

@@ -7,7 +7,7 @@ from facefusion.types import State, StateKey, StateSet

|

||||

STATE_SET : Union[StateSet, ProcessorStateSet] =\

|

||||

{

|

||||

'cli': {}, #type:ignore[assignment]

|

||||

'ui': {} #type:ignore[assignment]

|

||||

'api': {} #type:ignore[assignment]

|

||||

}

|

||||

|

||||

|

||||

@@ -17,12 +17,12 @@ def get_state() -> Union[State, ProcessorState]:

|

||||

|

||||

|

||||

def sync_state() -> None:

|

||||

STATE_SET['cli'] = STATE_SET.get('ui') #type:ignore[assignment]

|

||||

STATE_SET['cli'] = STATE_SET.get('api') #type:ignore[assignment]

|

||||

|

||||

|

||||

def init_item(key : Union[StateKey, ProcessorStateKey], value : Any) -> None:

|

||||

STATE_SET['cli'][key] = value #type:ignore[literal-required]

|

||||

STATE_SET['ui'][key] = value #type:ignore[literal-required]

|

||||

STATE_SET['api'][key] = value #type:ignore[literal-required]

|

||||

|

||||

|

||||

def get_item(key : Union[StateKey, ProcessorStateKey]) -> Any:

|

||||

@@ -35,7 +35,7 @@ def set_item(key : Union[StateKey, ProcessorStateKey], value : Any) -> None:

|

||||

|

||||

|

||||

def sync_item(key : Union[StateKey, ProcessorStateKey]) -> None:

|

||||

STATE_SET['cli'][key] = STATE_SET.get('ui').get(key) #type:ignore[literal-required]

|

||||

STATE_SET['cli'][key] = STATE_SET.get('api').get(key) #type:ignore[literal-required]

|

||||

|

||||

|

||||

def clear_item(key : Union[StateKey, ProcessorStateKey]) -> None:

|

||||

|

||||

+1

-9

@@ -228,13 +228,11 @@ Download = TypedDict('Download',

|

||||

DownloadSet : TypeAlias = Dict[str, Download]

|

||||

|

||||

VideoMemoryStrategy = Literal['strict', 'moderate', 'tolerant']

|

||||

AppContext = Literal['cli', 'ui']

|

||||

AppContext = Literal['cli', 'api']

|

||||

|

||||

InferencePool : TypeAlias = Dict[str, InferenceSession]

|

||||

InferencePoolSet : TypeAlias = Dict[AppContext, Dict[str, InferencePool]]

|

||||

|

||||

UiWorkflow = Literal['instant_runner', 'job_runner', 'job_manager']

|

||||

|

||||

JobStore = TypedDict('JobStore',

|

||||

{

|

||||

'job_keys' : List[str],

|

||||

@@ -313,9 +311,6 @@ StateKey = Literal\

|

||||

'output_video_scale',

|

||||

'output_video_fps',

|

||||

'processors',

|

||||

'open_browser',

|

||||

'ui_layouts',

|

||||

'ui_workflow',

|

||||

'execution_device_ids',

|

||||

'execution_providers',

|

||||

'execution_thread_count',

|

||||

@@ -383,9 +378,6 @@ State = TypedDict('State',

|

||||

'output_video_scale' : Scale,

|

||||

'output_video_fps' : float,

|

||||

'processors' : List[str],

|

||||

'open_browser' : bool,

|

||||

'ui_layouts' : List[str],

|

||||

'ui_workflow' : UiWorkflow,

|

||||

'execution_device_ids' : List[int],

|

||||

'execution_providers' : List[ExecutionProvider],

|

||||

'execution_thread_count' : int,

|

||||

|

||||

@@ -1,163 +0,0 @@

|

||||

:root:root:root:root .gradio-container

|

||||

{

|

||||

overflow: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root main

|

||||

{

|

||||

max-width: 110em;

|

||||

}

|

||||

|

||||

:root:root:root:root .tab-like-container input[type="number"]

|

||||

{

|

||||

border-radius: unset;

|

||||

text-align: center;

|

||||

order: 1;

|

||||

padding: unset

|

||||

}

|

||||

|

||||

:root:root:root:root input[type="number"]

|

||||

{

|

||||

appearance: textfield;

|

||||

}

|

||||

|

||||

:root:root:root:root input[type="number"]::-webkit-inner-spin-button

|

||||

{

|

||||

appearance: none;

|

||||

}

|

||||

|

||||

:root:root:root:root input[type="number"]:focus

|

||||

{

|

||||

outline: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root .reset-button

|

||||

{

|

||||

background: var(--background-fill-secondary);

|

||||

border: unset;

|

||||

font-size: unset;

|

||||

padding: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root [type="checkbox"],

|

||||

:root:root:root:root [type="radio"]

|

||||

{

|

||||

border-radius: 50%;

|

||||

height: 1.125rem;

|

||||

width: 1.125rem;

|

||||

}

|

||||

|

||||

:root:root:root:root input[type="range"]

|

||||

{

|

||||

background: transparent;

|

||||

}

|

||||

|

||||

:root:root:root:root input[type="range"]::-moz-range-thumb,

|

||||

:root:root:root:root input[type="range"]::-webkit-slider-thumb

|

||||

{

|

||||

background: var(--neutral-300);

|

||||

box-shadow: unset;

|

||||

border-radius: 50%;

|

||||

height: 1.125rem;

|

||||

width: 1.125rem;

|

||||

}

|

||||

|

||||

:root:root:root:root .thumbnail-item

|

||||

{

|

||||

border: unset;

|

||||

box-shadow: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root .grid-wrap.fixed-height

|

||||

{

|

||||

min-height: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root .box-face-selector .empty,

|

||||

:root:root:root:root .box-face-selector .gallery-container

|

||||

{

|

||||

min-height: 7.375rem;

|

||||

}

|

||||

|

||||

:root:root:root:root .tab-wrapper

|

||||

{

|

||||

padding: 0 0.625rem;

|

||||

}

|

||||

|

||||

:root:root:root:root .tab-container

|

||||

{

|

||||

gap: 0.5em;

|

||||

}

|

||||

|

||||

:root:root:root:root .tab-container button

|

||||

{

|

||||

background: unset;

|

||||

border-bottom: 0.125rem solid;

|

||||

}

|

||||

|

||||

:root:root:root:root .tab-container button.selected

|

||||

{

|

||||

color: var(--primary-500)

|

||||

}

|

||||

|

||||

:root:root:root:root .toast-body

|

||||

{

|

||||

background: white;

|

||||

color: var(--primary-500);

|

||||

border: unset;

|

||||

border-radius: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root .dark .toast-body

|

||||

{

|

||||

background: var(--neutral-900);

|

||||

color: var(--primary-600);

|

||||

}

|

||||

|

||||

:root:root:root:root .toast-icon,

|

||||

:root:root:root:root .toast-title,

|

||||

:root:root:root:root .toast-text,

|

||||

:root:root:root:root .toast-close

|

||||

{

|

||||

color: unset;

|

||||

}

|

||||

|

||||

:root:root:root:root .toast-body .timer

|

||||

{

|

||||

background: currentColor;

|

||||

}

|

||||

|

||||

:root:root:root:root .slider_input_container > span,

|

||||

:root:root:root:root .feather-upload,

|

||||

:root:root:root:root footer

|

||||

{

|

||||

display: none;

|

||||

}

|

||||

|

||||

:root:root:root:root .image-frame

|

||||

{

|

||||

background-image: conic-gradient(#fff 90deg, #999 90deg 180deg, #fff 180deg 270deg, #999 270deg);

|

||||

background-size: 1.25rem 1.25rem;

|

||||

background-repeat: repeat;

|

||||

width: 100%;

|

||||

}

|

||||

|

||||

:root:root:root:root .image-frame > img

|

||||

{

|

||||

object-fit: cover;

|

||||

}

|

||||

|

||||

:root:root:root:root .image-preview.is-landscape

|

||||

{

|

||||

position: sticky;

|

||||

top: 0;

|

||||

z-index: 100;

|

||||

}

|

||||

|

||||

:root:root:root:root .block .error

|

||||

{

|

||||

border: 0.125rem solid;

|

||||

padding: 0.375rem 0.75rem;

|

||||

font-size: 0.75rem;

|

||||

text-transform: uppercase;

|

||||

}

|

||||

@@ -1,25 +0,0 @@

|

||||

from typing import Dict, List

|

||||

|

||||

from facefusion.types import Color, WebcamMode

|

||||

from facefusion.uis.types import JobManagerAction, JobRunnerAction, PreviewMode

|

||||

|

||||

job_manager_actions : List[JobManagerAction] = [ 'job-create', 'job-submit', 'job-delete', 'job-add-step', 'job-remix-step', 'job-insert-step', 'job-remove-step' ]

|

||||

job_runner_actions : List[JobRunnerAction] = [ 'job-run', 'job-run-all', 'job-retry', 'job-retry-all' ]

|

||||

|

||||

common_options : List[str] = [ 'keep-temp' ]

|

||||

|

||||

preview_modes : List[PreviewMode] = [ 'default', 'frame-by-frame', 'face-by-face' ]

|

||||

preview_resolutions : List[str] = [ '512x512', '768x768', '1024x1024' ]

|

||||

|

||||

webcam_modes : List[WebcamMode] = [ 'inline', 'udp', 'v4l2' ]

|

||||

webcam_resolutions : List[str] = [ '320x240', '640x480', '800x600', '1024x768', '1280x720', '1280x960', '1920x1080' ]

|

||||

|

||||

background_remover_fill_colors : Dict[str, Color] =\

|

||||

{

|

||||

'red' : (255, 0, 0, 255),

|

||||

'green' : (0, 255, 0, 255),

|

||||

'blue' : (0, 0, 255, 255),

|

||||

'black' : (0, 0, 0, 255),

|

||||

'white' : (255, 255, 255, 255),

|

||||

'alpha' : (0, 0, 0, 0)

|

||||

}

|

||||

@@ -1,41 +0,0 @@

|

||||

import random

|

||||

from typing import Optional

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import metadata, translator

|

||||

|

||||

METADATA_BUTTON : Optional[gradio.Button] = None

|

||||

ACTION_BUTTON : Optional[gradio.Button] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global METADATA_BUTTON

|

||||

global ACTION_BUTTON

|

||||

|

||||

action = random.choice(

|

||||

[

|

||||

{

|

||||

'translator': translator.get('about.fund'),

|

||||

'url': 'https://fund.facefusion.io'

|

||||

},

|

||||

{

|

||||

'translator': translator.get('about.subscribe'),

|

||||

'url': 'https://subscribe.facefusion.io'

|

||||

},

|

||||

{

|

||||

'translator': translator.get('about.join'),

|

||||

'url': 'https://join.facefusion.io'

|

||||

}

|

||||

])

|

||||

|

||||

METADATA_BUTTON = gradio.Button(

|

||||

value = metadata.get('name') + ' ' + metadata.get('version'),

|

||||

variant = 'primary',

|

||||

link = metadata.get('url')

|

||||

)

|

||||

ACTION_BUTTON = gradio.Button(

|

||||

value = action.get('translator'),

|

||||

link = action.get('url'),

|

||||

size = 'sm'

|

||||

)

|

||||

@@ -1,64 +0,0 @@

|

||||

from typing import List, Optional, Tuple

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_float_step

|

||||

from facefusion.processors.core import load_processor_module

|

||||

from facefusion.processors.modules.age_modifier import choices as age_modifier_choices

|

||||

from facefusion.processors.modules.age_modifier.types import AgeModifierModel

|

||||

from facefusion.uis.core import get_ui_component, register_ui_component

|

||||

|

||||

AGE_MODIFIER_MODEL_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

AGE_MODIFIER_DIRECTION_SLIDER : Optional[gradio.Slider] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global AGE_MODIFIER_MODEL_DROPDOWN

|

||||

global AGE_MODIFIER_DIRECTION_SLIDER

|

||||

|

||||

has_age_modifier = 'age_modifier' in state_manager.get_item('processors')

|

||||

AGE_MODIFIER_MODEL_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.model_dropdown', 'facefusion.processors.modules.age_modifier'),

|

||||

choices = age_modifier_choices.age_modifier_models,

|

||||

value = state_manager.get_item('age_modifier_model'),

|

||||

visible = has_age_modifier

|

||||

)

|

||||

AGE_MODIFIER_DIRECTION_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.direction_slider', 'facefusion.processors.modules.age_modifier'),

|

||||

value = state_manager.get_item('age_modifier_direction'),

|

||||

step = calculate_float_step(age_modifier_choices.age_modifier_direction_range),

|

||||

minimum = age_modifier_choices.age_modifier_direction_range[0],

|

||||

maximum = age_modifier_choices.age_modifier_direction_range[-1],

|

||||

visible = has_age_modifier

|

||||

)

|

||||

register_ui_component('age_modifier_model_dropdown', AGE_MODIFIER_MODEL_DROPDOWN)

|

||||

register_ui_component('age_modifier_direction_slider', AGE_MODIFIER_DIRECTION_SLIDER)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

AGE_MODIFIER_MODEL_DROPDOWN.change(update_age_modifier_model, inputs = AGE_MODIFIER_MODEL_DROPDOWN, outputs = AGE_MODIFIER_MODEL_DROPDOWN)

|

||||

AGE_MODIFIER_DIRECTION_SLIDER.release(update_age_modifier_direction, inputs = AGE_MODIFIER_DIRECTION_SLIDER)

|

||||

|

||||

processors_checkbox_group = get_ui_component('processors_checkbox_group')

|

||||

if processors_checkbox_group:

|

||||

processors_checkbox_group.change(remote_update, inputs = processors_checkbox_group, outputs = [ AGE_MODIFIER_MODEL_DROPDOWN, AGE_MODIFIER_DIRECTION_SLIDER ])

|

||||

|

||||

|

||||

def remote_update(processors : List[str]) -> Tuple[gradio.Dropdown, gradio.Slider]:

|

||||

has_age_modifier = 'age_modifier' in processors

|

||||

return gradio.Dropdown(visible = has_age_modifier), gradio.Slider(visible = has_age_modifier)

|

||||

|

||||

|

||||

def update_age_modifier_model(age_modifier_model : AgeModifierModel) -> gradio.Dropdown:

|

||||

age_modifier_module = load_processor_module('age_modifier')

|

||||

age_modifier_module.clear_inference_pool()

|

||||

state_manager.set_item('age_modifier_model', age_modifier_model)

|

||||

|

||||

if age_modifier_module.pre_check():

|

||||

return gradio.Dropdown(value = state_manager.get_item('age_modifier_model'))

|

||||

return gradio.Dropdown()

|

||||

|

||||

|

||||

def update_age_modifier_direction(age_modifier_direction : float) -> None:

|

||||

state_manager.set_item('age_modifier_direction', int(age_modifier_direction))

|

||||

@@ -1,165 +0,0 @@

|

||||

from typing import List, Optional, Tuple

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_int_step

|

||||

from facefusion.processors.core import load_processor_module

|

||||

from facefusion.processors.modules.background_remover import choices as background_remover_choices

|

||||

from facefusion.processors.modules.background_remover.types import BackgroundRemoverModel

|

||||

from facefusion.sanitizer import sanitize_int_range

|

||||

from facefusion.uis.core import get_ui_component, register_ui_component

|

||||

|

||||

BACKGROUND_REMOVER_MODEL_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

BACKGROUND_REMOVER_FILL_COLOR_WRAPPER : Optional[gradio.Group] = None

|

||||

BACKGROUND_REMOVER_FILL_COLOR_RED_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_FILL_COLOR_GREEN_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_FILL_COLOR_BLUE_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_FILL_COLOR_ALPHA_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_WRAPPER : Optional[gradio.Group] = None

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_RED_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_GREEN_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_BLUE_NUMBER : Optional[gradio.Number] = None

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_ALPHA_NUMBER : Optional[gradio.Number] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global BACKGROUND_REMOVER_MODEL_DROPDOWN

|

||||

global BACKGROUND_REMOVER_FILL_COLOR_WRAPPER

|

||||

global BACKGROUND_REMOVER_FILL_COLOR_RED_NUMBER

|

||||

global BACKGROUND_REMOVER_FILL_COLOR_GREEN_NUMBER

|

||||

global BACKGROUND_REMOVER_FILL_COLOR_BLUE_NUMBER

|

||||

global BACKGROUND_REMOVER_FILL_COLOR_ALPHA_NUMBER

|

||||

global BACKGROUND_REMOVER_DESPILL_COLOR_WRAPPER

|

||||

global BACKGROUND_REMOVER_DESPILL_COLOR_RED_NUMBER

|

||||

global BACKGROUND_REMOVER_DESPILL_COLOR_GREEN_NUMBER

|

||||

global BACKGROUND_REMOVER_DESPILL_COLOR_BLUE_NUMBER

|

||||

global BACKGROUND_REMOVER_DESPILL_COLOR_ALPHA_NUMBER

|

||||

|

||||

has_background_remover = 'background_remover' in state_manager.get_item('processors')

|

||||

background_remover_fill_color = state_manager.get_item('background_remover_fill_color')

|

||||

background_remover_despill_color = state_manager.get_item('background_remover_despill_color')

|

||||

BACKGROUND_REMOVER_MODEL_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.model_dropdown', 'facefusion.processors.modules.background_remover'),

|

||||

choices = background_remover_choices.background_remover_models,

|

||||

value = state_manager.get_item('background_remover_model'),

|

||||

visible = has_background_remover

|

||||

)

|

||||

with gradio.Group(visible = has_background_remover) as BACKGROUND_REMOVER_FILL_COLOR_WRAPPER:

|

||||

with gradio.Row():

|

||||

BACKGROUND_REMOVER_FILL_COLOR_RED_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.fill_color_red_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_fill_color[0],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

BACKGROUND_REMOVER_FILL_COLOR_GREEN_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.fill_color_green_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_fill_color[1],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

with gradio.Row():

|

||||

BACKGROUND_REMOVER_FILL_COLOR_BLUE_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.fill_color_blue_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_fill_color[2],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

BACKGROUND_REMOVER_FILL_COLOR_ALPHA_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.fill_color_alpha_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_fill_color[3],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

with gradio.Group(visible = has_background_remover) as BACKGROUND_REMOVER_DESPILL_COLOR_WRAPPER:

|

||||

with gradio.Row():

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_RED_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.despill_color_red_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_despill_color[0],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_GREEN_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.despill_color_green_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_despill_color[1],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

with gradio.Row():

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_BLUE_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.despill_color_blue_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_despill_color[2],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

BACKGROUND_REMOVER_DESPILL_COLOR_ALPHA_NUMBER = gradio.Number(

|

||||

label = translator.get('uis.despill_color_alpha_number', 'facefusion.processors.modules.background_remover'),

|

||||

value = background_remover_despill_color[3],

|

||||

minimum = background_remover_choices.background_remover_color_range[0],

|

||||

maximum = background_remover_choices.background_remover_color_range[-1],

|

||||

step = calculate_int_step(background_remover_choices.background_remover_color_range)

|

||||

)

|

||||

register_ui_component('background_remover_model_dropdown', BACKGROUND_REMOVER_MODEL_DROPDOWN)

|

||||

register_ui_component('background_remover_fill_color_red_number', BACKGROUND_REMOVER_FILL_COLOR_RED_NUMBER)

|

||||

register_ui_component('background_remover_fill_color_green_number', BACKGROUND_REMOVER_FILL_COLOR_GREEN_NUMBER)

|

||||

register_ui_component('background_remover_fill_color_blue_number', BACKGROUND_REMOVER_FILL_COLOR_BLUE_NUMBER)

|

||||

register_ui_component('background_remover_fill_color_alpha_number', BACKGROUND_REMOVER_FILL_COLOR_ALPHA_NUMBER)

|

||||

register_ui_component('background_remover_despill_color_red_number', BACKGROUND_REMOVER_DESPILL_COLOR_RED_NUMBER)

|

||||

register_ui_component('background_remover_despill_color_green_number', BACKGROUND_REMOVER_DESPILL_COLOR_GREEN_NUMBER)

|

||||

register_ui_component('background_remover_despill_color_blue_number', BACKGROUND_REMOVER_DESPILL_COLOR_BLUE_NUMBER)

|

||||

register_ui_component('background_remover_despill_color_alpha_number', BACKGROUND_REMOVER_DESPILL_COLOR_ALPHA_NUMBER)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

BACKGROUND_REMOVER_MODEL_DROPDOWN.change(update_background_remover_model, inputs = BACKGROUND_REMOVER_MODEL_DROPDOWN, outputs = BACKGROUND_REMOVER_MODEL_DROPDOWN)

|

||||

background_remover_fill_color_inputs = [ BACKGROUND_REMOVER_FILL_COLOR_RED_NUMBER, BACKGROUND_REMOVER_FILL_COLOR_GREEN_NUMBER, BACKGROUND_REMOVER_FILL_COLOR_BLUE_NUMBER, BACKGROUND_REMOVER_FILL_COLOR_ALPHA_NUMBER ]

|

||||

background_remover_despill_color_inputs = [ BACKGROUND_REMOVER_DESPILL_COLOR_RED_NUMBER, BACKGROUND_REMOVER_DESPILL_COLOR_GREEN_NUMBER, BACKGROUND_REMOVER_DESPILL_COLOR_BLUE_NUMBER, BACKGROUND_REMOVER_DESPILL_COLOR_ALPHA_NUMBER ]

|

||||

|

||||

for background_remover_fill_color_input in background_remover_fill_color_inputs:

|

||||

background_remover_fill_color_input.change(update_background_remover_fill_color, inputs = background_remover_fill_color_inputs)

|

||||

|

||||

for background_remover_despill_color_input in background_remover_despill_color_inputs:

|

||||

background_remover_despill_color_input.change(update_background_remover_despill_color, inputs = background_remover_despill_color_inputs)

|

||||

|

||||

processors_checkbox_group = get_ui_component('processors_checkbox_group')

|

||||

if processors_checkbox_group:

|

||||

processors_checkbox_group.change(remote_update, inputs = processors_checkbox_group, outputs = [ BACKGROUND_REMOVER_MODEL_DROPDOWN, BACKGROUND_REMOVER_FILL_COLOR_WRAPPER, BACKGROUND_REMOVER_DESPILL_COLOR_WRAPPER ])

|

||||

|

||||

|

||||

def remote_update(processors : List[str]) -> Tuple[gradio.Dropdown, gradio.Group, gradio.Group]:

|

||||

has_background_remover = 'background_remover' in processors

|

||||

return gradio.Dropdown(visible = has_background_remover), gradio.Group(visible = has_background_remover), gradio.Group(visible = has_background_remover)

|

||||

|

||||

|

||||

def update_background_remover_model(background_remover_model : BackgroundRemoverModel) -> gradio.Dropdown:

|

||||

background_remover_module = load_processor_module('background_remover')

|

||||

background_remover_module.clear_inference_pool()

|

||||

state_manager.set_item('background_remover_model', background_remover_model)

|

||||

|

||||

if background_remover_module.pre_check():

|

||||

return gradio.Dropdown(value = state_manager.get_item('background_remover_model'))

|

||||

return gradio.Dropdown()

|

||||

|

||||

|

||||

def update_background_remover_fill_color(red : int, green : int, blue : int, alpha : int) -> None:

|

||||

red = sanitize_int_range(red, background_remover_choices.background_remover_color_range)

|

||||

green = sanitize_int_range(green, background_remover_choices.background_remover_color_range)

|

||||

blue = sanitize_int_range(blue, background_remover_choices.background_remover_color_range)

|

||||

alpha = sanitize_int_range(alpha, background_remover_choices.background_remover_color_range)

|

||||

state_manager.set_item('background_remover_fill_color', (red, green, blue, alpha))

|

||||

|

||||

|

||||

def update_background_remover_despill_color(red : int, green : int, blue : int, alpha : int) -> None:

|

||||

red = sanitize_int_range(red, background_remover_choices.background_remover_color_range)

|

||||

green = sanitize_int_range(green, background_remover_choices.background_remover_color_range)

|

||||

blue = sanitize_int_range(blue, background_remover_choices.background_remover_color_range)

|

||||

alpha = sanitize_int_range(alpha, background_remover_choices.background_remover_color_range)

|

||||

state_manager.set_item('background_remover_despill_color', (red, green, blue, alpha))

|

||||

@@ -1,51 +0,0 @@

|

||||

from typing import Any, Iterator, List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import benchmarker, state_manager, translator

|

||||

|

||||

BENCHMARK_BENCHMARKS_DATAFRAME : Optional[gradio.Dataframe] = None

|

||||

BENCHMARK_START_BUTTON : Optional[gradio.Button] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global BENCHMARK_BENCHMARKS_DATAFRAME

|

||||

global BENCHMARK_START_BUTTON

|

||||

|

||||

BENCHMARK_BENCHMARKS_DATAFRAME = gradio.Dataframe(

|

||||

headers =

|

||||

[

|

||||

'target_path',

|

||||

'cycle_count',

|

||||

'average_run',

|

||||

'fastest_run',

|

||||

'slowest_run',

|

||||

'relative_fps'

|

||||

],

|

||||

datatype =

|

||||

[

|

||||

'str',

|

||||

'number',

|

||||

'number',

|

||||

'number',

|

||||

'number',

|

||||

'number'

|

||||

],

|

||||

show_label = False

|

||||

)

|

||||

BENCHMARK_START_BUTTON = gradio.Button(

|

||||

value = translator.get('uis.start_button'),

|

||||

variant = 'primary',

|

||||

size = 'sm'

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

BENCHMARK_START_BUTTON.click(start, outputs = BENCHMARK_BENCHMARKS_DATAFRAME)

|

||||

|

||||

|

||||

def start() -> Iterator[List[Any]]:

|

||||

state_manager.sync_state()

|

||||

|

||||

for benchmark in benchmarker.run():

|

||||

yield [ list(benchmark_set.values()) for benchmark_set in benchmark ]

|

||||

|

||||

@@ -1,54 +0,0 @@

|

||||

from typing import List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

import facefusion.choices

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_int_step

|

||||

from facefusion.types import BenchmarkMode, BenchmarkResolution

|

||||

|

||||

BENCHMARK_MODE_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

BENCHMARK_RESOLUTIONS_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

BENCHMARK_CYCLE_COUNT_SLIDER : Optional[gradio.Button] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global BENCHMARK_MODE_DROPDOWN

|

||||

global BENCHMARK_RESOLUTIONS_CHECKBOX_GROUP

|

||||

global BENCHMARK_CYCLE_COUNT_SLIDER

|

||||

|

||||

BENCHMARK_MODE_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.benchmark_mode_dropdown'),

|

||||

choices = facefusion.choices.benchmark_modes,

|

||||

value = state_manager.get_item('benchmark_mode')

|

||||

)

|

||||

BENCHMARK_RESOLUTIONS_CHECKBOX_GROUP = gradio.CheckboxGroup(

|

||||

label = translator.get('uis.benchmark_resolutions_checkbox_group'),

|

||||

choices = facefusion.choices.benchmark_resolutions,

|

||||

value = state_manager.get_item('benchmark_resolutions')

|

||||

)

|

||||

BENCHMARK_CYCLE_COUNT_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.benchmark_cycle_count_slider'),

|

||||

value = state_manager.get_item('benchmark_cycle_count'),

|

||||

step = calculate_int_step(facefusion.choices.benchmark_cycle_count_range),

|

||||

minimum = facefusion.choices.benchmark_cycle_count_range[0],

|

||||

maximum = facefusion.choices.benchmark_cycle_count_range[-1]

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

BENCHMARK_MODE_DROPDOWN.change(update_benchmark_mode, inputs = BENCHMARK_MODE_DROPDOWN)

|

||||

BENCHMARK_RESOLUTIONS_CHECKBOX_GROUP.change(update_benchmark_resolutions, inputs = BENCHMARK_RESOLUTIONS_CHECKBOX_GROUP)

|

||||

BENCHMARK_CYCLE_COUNT_SLIDER.release(update_benchmark_cycle_count, inputs = BENCHMARK_CYCLE_COUNT_SLIDER)

|

||||

|

||||

|

||||

def update_benchmark_mode(benchmark_mode : BenchmarkMode) -> None:

|

||||

state_manager.set_item('benchmark_mode', benchmark_mode)

|

||||

|

||||

|

||||

def update_benchmark_resolutions(benchmark_resolutions : List[BenchmarkResolution]) -> None:

|

||||

state_manager.set_item('benchmark_resolutions', benchmark_resolutions)

|

||||

|

||||

|

||||

def update_benchmark_cycle_count(benchmark_cycle_count : int) -> None:

|

||||

state_manager.set_item('benchmark_cycle_count', benchmark_cycle_count)

|

||||

@@ -1,32 +0,0 @@

|

||||

from typing import List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.uis import choices as uis_choices

|

||||

|

||||

COMMON_OPTIONS_CHECKBOX_GROUP : Optional[gradio.Checkboxgroup] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global COMMON_OPTIONS_CHECKBOX_GROUP

|

||||

|

||||

common_options = []

|

||||

|

||||

if state_manager.get_item('keep_temp'):

|

||||

common_options.append('keep-temp')

|

||||

|

||||

COMMON_OPTIONS_CHECKBOX_GROUP = gradio.Checkboxgroup(

|

||||

label = translator.get('uis.common_options_checkbox_group'),

|

||||

choices = uis_choices.common_options,

|

||||

value = common_options

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

COMMON_OPTIONS_CHECKBOX_GROUP.change(update, inputs = COMMON_OPTIONS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def update(common_options : List[str]) -> None:

|

||||

keep_temp = 'keep-temp' in common_options

|

||||

state_manager.set_item('keep_temp', keep_temp)

|

||||

@@ -1,64 +0,0 @@

|

||||

from typing import List, Optional, Tuple

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_int_step

|

||||

from facefusion.processors.core import load_processor_module

|

||||

from facefusion.processors.modules.deep_swapper import choices as deep_swapper_choices

|

||||

from facefusion.processors.modules.deep_swapper.types import DeepSwapperModel

|

||||

from facefusion.uis.core import get_ui_component, register_ui_component

|

||||

|

||||

DEEP_SWAPPER_MODEL_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

DEEP_SWAPPER_MORPH_SLIDER : Optional[gradio.Slider] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global DEEP_SWAPPER_MODEL_DROPDOWN

|

||||

global DEEP_SWAPPER_MORPH_SLIDER

|

||||

|

||||

has_deep_swapper = 'deep_swapper' in state_manager.get_item('processors')

|

||||

DEEP_SWAPPER_MODEL_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.model_dropdown', 'facefusion.processors.modules.deep_swapper'),

|

||||

choices = deep_swapper_choices.deep_swapper_models,

|

||||

value = state_manager.get_item('deep_swapper_model'),

|

||||

visible = has_deep_swapper

|

||||

)

|

||||

DEEP_SWAPPER_MORPH_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.morph_slider', 'facefusion.processors.modules.deep_swapper'),

|

||||

value = state_manager.get_item('deep_swapper_morph'),

|

||||

step = calculate_int_step(deep_swapper_choices.deep_swapper_morph_range),

|

||||

minimum = deep_swapper_choices.deep_swapper_morph_range[0],

|

||||

maximum = deep_swapper_choices.deep_swapper_morph_range[-1],

|

||||

visible = has_deep_swapper and load_processor_module('deep_swapper').get_inference_pool() and load_processor_module('deep_swapper').has_morph_input()

|

||||

)

|

||||

register_ui_component('deep_swapper_model_dropdown', DEEP_SWAPPER_MODEL_DROPDOWN)

|

||||

register_ui_component('deep_swapper_morph_slider', DEEP_SWAPPER_MORPH_SLIDER)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

DEEP_SWAPPER_MODEL_DROPDOWN.change(update_deep_swapper_model, inputs = DEEP_SWAPPER_MODEL_DROPDOWN, outputs = [ DEEP_SWAPPER_MODEL_DROPDOWN, DEEP_SWAPPER_MORPH_SLIDER ])

|

||||

DEEP_SWAPPER_MORPH_SLIDER.release(update_deep_swapper_morph, inputs = DEEP_SWAPPER_MORPH_SLIDER)

|

||||

|

||||

processors_checkbox_group = get_ui_component('processors_checkbox_group')

|

||||

if processors_checkbox_group:

|

||||

processors_checkbox_group.change(remote_update, inputs = processors_checkbox_group, outputs = [ DEEP_SWAPPER_MODEL_DROPDOWN, DEEP_SWAPPER_MORPH_SLIDER ])

|

||||

|

||||

|

||||

def remote_update(processors : List[str]) -> Tuple[gradio.Dropdown, gradio.Slider]:

|

||||

has_deep_swapper = 'deep_swapper' in processors

|

||||

return gradio.Dropdown(visible = has_deep_swapper), gradio.Slider(visible = has_deep_swapper and load_processor_module('deep_swapper').get_inference_pool() and load_processor_module('deep_swapper').has_morph_input())

|

||||

|

||||

|

||||

def update_deep_swapper_model(deep_swapper_model : DeepSwapperModel) -> Tuple[gradio.Dropdown, gradio.Slider]:

|

||||

deep_swapper_module = load_processor_module('deep_swapper')

|

||||

deep_swapper_module.clear_inference_pool()

|

||||

state_manager.set_item('deep_swapper_model', deep_swapper_model)

|

||||

|

||||

if deep_swapper_module.pre_check():

|

||||

return gradio.Dropdown(value = state_manager.get_item('deep_swapper_model')), gradio.Slider(visible = deep_swapper_module.has_morph_input())

|

||||

return gradio.Dropdown(), gradio.Slider()

|

||||

|

||||

|

||||

def update_deep_swapper_morph(deep_swapper_morph : int) -> None:

|

||||

state_manager.set_item('deep_swapper_morph', deep_swapper_morph)

|

||||

@@ -1,48 +0,0 @@

|

||||

from typing import List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

import facefusion.choices

|

||||

from facefusion import content_analyser, face_classifier, face_detector, face_landmarker, face_masker, face_recognizer, state_manager, translator, voice_extractor

|

||||

from facefusion.filesystem import get_file_name, resolve_file_paths

|

||||

from facefusion.processors.core import get_processors_modules

|

||||

from facefusion.types import DownloadProvider

|

||||

|

||||

DOWNLOAD_PROVIDERS_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global DOWNLOAD_PROVIDERS_CHECKBOX_GROUP

|

||||

|

||||

DOWNLOAD_PROVIDERS_CHECKBOX_GROUP = gradio.CheckboxGroup(

|

||||

label = translator.get('uis.download_providers_checkbox_group'),

|

||||

choices = facefusion.choices.download_providers,

|

||||

value = state_manager.get_item('download_providers')

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

DOWNLOAD_PROVIDERS_CHECKBOX_GROUP.change(update_download_providers, inputs = DOWNLOAD_PROVIDERS_CHECKBOX_GROUP, outputs = DOWNLOAD_PROVIDERS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def update_download_providers(download_providers : List[DownloadProvider]) -> gradio.CheckboxGroup:

|

||||

common_modules =\

|

||||

[

|

||||

content_analyser,

|

||||

face_classifier,

|

||||

face_detector,

|

||||

face_landmarker,

|

||||

face_recognizer,

|

||||

face_masker,

|

||||

voice_extractor

|

||||

]

|

||||

available_processors = [ get_file_name(file_path) for file_path in resolve_file_paths('facefusion/processors/modules') ]

|

||||

processor_modules = get_processors_modules(available_processors)

|

||||

|

||||

for module in common_modules + processor_modules:

|

||||

if hasattr(module, 'create_static_model_set'):

|

||||

module.create_static_model_set.cache_clear()

|

||||

|

||||

download_providers = download_providers or facefusion.choices.download_providers

|

||||

state_manager.set_item('download_providers', download_providers)

|

||||

return gradio.CheckboxGroup(value = state_manager.get_item('download_providers'))

|

||||

@@ -1,48 +0,0 @@

|

||||

from typing import List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import content_analyser, face_classifier, face_detector, face_landmarker, face_masker, face_recognizer, state_manager, translator, voice_extractor

|

||||

from facefusion.execution import get_available_execution_providers

|

||||

from facefusion.filesystem import get_file_name, resolve_file_paths

|

||||

from facefusion.processors.core import get_processors_modules

|

||||

from facefusion.types import ExecutionProvider

|

||||

|

||||

EXECUTION_PROVIDERS_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global EXECUTION_PROVIDERS_CHECKBOX_GROUP

|

||||

|

||||

EXECUTION_PROVIDERS_CHECKBOX_GROUP = gradio.CheckboxGroup(

|

||||

label = translator.get('uis.execution_providers_checkbox_group'),

|

||||

choices = get_available_execution_providers(),

|

||||

value = state_manager.get_item('execution_providers')

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

EXECUTION_PROVIDERS_CHECKBOX_GROUP.change(update_execution_providers, inputs = EXECUTION_PROVIDERS_CHECKBOX_GROUP, outputs = EXECUTION_PROVIDERS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def update_execution_providers(execution_providers : List[ExecutionProvider]) -> gradio.CheckboxGroup:

|

||||

common_modules =\

|

||||

[

|

||||

content_analyser,

|

||||

face_classifier,

|

||||

face_detector,

|

||||

face_landmarker,

|

||||

face_masker,

|

||||

face_recognizer,

|

||||

voice_extractor

|

||||

]

|

||||

available_processors = [ get_file_name(file_path) for file_path in resolve_file_paths('facefusion/processors/modules') ]

|

||||

processor_modules = get_processors_modules(available_processors)

|

||||

|

||||

for module in common_modules + processor_modules:

|

||||

if hasattr(module, 'clear_inference_pool'):

|

||||

module.clear_inference_pool()

|

||||

|

||||

execution_providers = execution_providers or get_available_execution_providers()

|

||||

state_manager.set_item('execution_providers', execution_providers)

|

||||

return gradio.CheckboxGroup(value = state_manager.get_item('execution_providers'))

|

||||

@@ -1,29 +0,0 @@

|

||||

from typing import Optional

|

||||

|

||||

import gradio

|

||||

|

||||

import facefusion.choices

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_int_step

|

||||

|

||||

EXECUTION_THREAD_COUNT_SLIDER : Optional[gradio.Slider] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global EXECUTION_THREAD_COUNT_SLIDER

|

||||

|

||||

EXECUTION_THREAD_COUNT_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.execution_thread_count_slider'),

|

||||

value = state_manager.get_item('execution_thread_count'),

|

||||

step = calculate_int_step(facefusion.choices.execution_thread_count_range),

|

||||

minimum = facefusion.choices.execution_thread_count_range[0],

|

||||

maximum = facefusion.choices.execution_thread_count_range[-1]

|

||||

)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

EXECUTION_THREAD_COUNT_SLIDER.release(update_execution_thread_count, inputs = EXECUTION_THREAD_COUNT_SLIDER)

|

||||

|

||||

|

||||

def update_execution_thread_count(execution_thread_count : float) -> None:

|

||||

state_manager.set_item('execution_thread_count', int(execution_thread_count))

|

||||

@@ -1,80 +0,0 @@

|

||||

from typing import List, Optional, Tuple

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.common_helper import calculate_float_step

|

||||

from facefusion.processors.core import load_processor_module

|

||||

from facefusion.processors.modules.expression_restorer import choices as expression_restorer_choices

|

||||

from facefusion.processors.modules.expression_restorer.types import ExpressionRestorerArea, ExpressionRestorerModel

|

||||

from facefusion.uis.core import get_ui_component, register_ui_component

|

||||

|

||||

EXPRESSION_RESTORER_MODEL_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

EXPRESSION_RESTORER_FACTOR_SLIDER : Optional[gradio.Slider] = None

|

||||

EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global EXPRESSION_RESTORER_MODEL_DROPDOWN

|

||||

global EXPRESSION_RESTORER_FACTOR_SLIDER

|

||||

global EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP

|

||||

|

||||

has_expression_restorer = 'expression_restorer' in state_manager.get_item('processors')

|

||||

EXPRESSION_RESTORER_MODEL_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.model_dropdown', 'facefusion.processors.modules.expression_restorer'),

|

||||

choices = expression_restorer_choices.expression_restorer_models,

|

||||

value = state_manager.get_item('expression_restorer_model'),

|

||||

visible = has_expression_restorer

|

||||

)

|

||||

EXPRESSION_RESTORER_FACTOR_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.factor_slider', 'facefusion.processors.modules.expression_restorer'),

|

||||

value = state_manager.get_item('expression_restorer_factor'),

|

||||

step = calculate_float_step(expression_restorer_choices.expression_restorer_factor_range),

|

||||

minimum = expression_restorer_choices.expression_restorer_factor_range[0],

|

||||

maximum = expression_restorer_choices.expression_restorer_factor_range[-1],

|

||||

visible = has_expression_restorer

|

||||

)

|

||||

EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP = gradio.CheckboxGroup(

|

||||

label = translator.get('uis.areas_checkbox_group', 'facefusion.processors.modules.expression_restorer'),

|

||||

choices = expression_restorer_choices.expression_restorer_areas,

|

||||

value = state_manager.get_item('expression_restorer_areas'),

|

||||

visible = has_expression_restorer

|

||||

)

|

||||

register_ui_component('expression_restorer_model_dropdown', EXPRESSION_RESTORER_MODEL_DROPDOWN)

|

||||

register_ui_component('expression_restorer_factor_slider', EXPRESSION_RESTORER_FACTOR_SLIDER)

|

||||

register_ui_component('expression_restorer_areas_checkbox_group', EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

EXPRESSION_RESTORER_MODEL_DROPDOWN.change(update_expression_restorer_model, inputs = EXPRESSION_RESTORER_MODEL_DROPDOWN, outputs = EXPRESSION_RESTORER_MODEL_DROPDOWN)

|

||||

EXPRESSION_RESTORER_FACTOR_SLIDER.release(update_expression_restorer_factor, inputs = EXPRESSION_RESTORER_FACTOR_SLIDER)

|

||||

EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP.change(update_expression_restorer_areas, inputs = EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP, outputs = EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP)

|

||||

|

||||

processors_checkbox_group = get_ui_component('processors_checkbox_group')

|

||||

if processors_checkbox_group:

|

||||

processors_checkbox_group.change(remote_update, inputs = processors_checkbox_group, outputs = [ EXPRESSION_RESTORER_MODEL_DROPDOWN, EXPRESSION_RESTORER_FACTOR_SLIDER, EXPRESSION_RESTORER_AREAS_CHECKBOX_GROUP ])

|

||||

|

||||

|

||||

def remote_update(processors : List[str]) -> Tuple[gradio.Dropdown, gradio.Slider, gradio.CheckboxGroup]:

|

||||

has_expression_restorer = 'expression_restorer' in processors

|

||||

return gradio.Dropdown(visible = has_expression_restorer), gradio.Slider(visible = has_expression_restorer), gradio.CheckboxGroup(visible = has_expression_restorer)

|

||||

|

||||

|

||||

def update_expression_restorer_model(expression_restorer_model : ExpressionRestorerModel) -> gradio.Dropdown:

|

||||

expression_restorer_module = load_processor_module('expression_restorer')

|

||||

expression_restorer_module.clear_inference_pool()

|

||||

state_manager.set_item('expression_restorer_model', expression_restorer_model)

|

||||

|

||||

if expression_restorer_module.pre_check():

|

||||

return gradio.Dropdown(value = state_manager.get_item('expression_restorer_model'))

|

||||

return gradio.Dropdown()

|

||||

|

||||

|

||||

def update_expression_restorer_factor(expression_restorer_factor : float) -> None:

|

||||

state_manager.set_item('expression_restorer_factor', int(expression_restorer_factor))

|

||||

|

||||

|

||||

def update_expression_restorer_areas(expression_restorer_areas : List[ExpressionRestorerArea]) -> gradio.CheckboxGroup:

|

||||

expression_restorer_areas = expression_restorer_areas or expression_restorer_choices.expression_restorer_areas

|

||||

state_manager.set_item('expression_restorer_areas', expression_restorer_areas)

|

||||

return gradio.CheckboxGroup(value = state_manager.get_item('expression_restorer_areas'))

|

||||

@@ -1,40 +0,0 @@

|

||||

from typing import List, Optional

|

||||

|

||||

import gradio

|

||||

|

||||

from facefusion import state_manager, translator

|

||||

from facefusion.processors.modules.face_debugger import choices as face_debugger_choices

|

||||

from facefusion.processors.modules.face_debugger.types import FaceDebuggerItem

|

||||

from facefusion.uis.core import get_ui_component, register_ui_component

|

||||

|

||||

FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP

|

||||

|

||||

has_face_debugger = 'face_debugger' in state_manager.get_item('processors')

|

||||

FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP = gradio.CheckboxGroup(

|

||||

label = translator.get('uis.items_checkbox_group', 'facefusion.processors.modules.face_debugger'),

|

||||

choices = face_debugger_choices.face_debugger_items,

|

||||

value = state_manager.get_item('face_debugger_items'),

|

||||

visible = has_face_debugger

|

||||

)

|

||||

register_ui_component('face_debugger_items_checkbox_group', FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def listen() -> None:

|

||||

FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP.change(update_face_debugger_items, inputs = FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP)

|

||||

|

||||

processors_checkbox_group = get_ui_component('processors_checkbox_group')

|

||||

if processors_checkbox_group:

|

||||

processors_checkbox_group.change(remote_update, inputs = processors_checkbox_group, outputs = FACE_DEBUGGER_ITEMS_CHECKBOX_GROUP)

|

||||

|

||||

|

||||

def remote_update(processors : List[str]) -> gradio.CheckboxGroup:

|

||||

has_face_debugger = 'face_debugger' in processors

|

||||

return gradio.CheckboxGroup(visible = has_face_debugger)

|

||||

|

||||

|

||||

def update_face_debugger_items(face_debugger_items : List[FaceDebuggerItem]) -> None:

|

||||

state_manager.set_item('face_debugger_items', face_debugger_items)

|

||||

@@ -1,103 +0,0 @@

|

||||

from typing import Optional, Sequence, Tuple

|

||||

|

||||

import gradio

|

||||

|

||||

import facefusion.choices

|

||||

from facefusion import face_detector, state_manager, translator

|

||||

from facefusion.common_helper import calculate_float_step, get_last

|

||||

from facefusion.sanitizer import sanitize_int_range

|

||||

from facefusion.types import Angle, FaceDetectorModel, Score

|

||||

from facefusion.uis.core import register_ui_component

|

||||

from facefusion.uis.types import ComponentOptions

|

||||

|

||||

FACE_DETECTOR_MODEL_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

FACE_DETECTOR_SIZE_DROPDOWN : Optional[gradio.Dropdown] = None

|

||||

FACE_DETECTOR_MARGIN_SLIDER : Optional[gradio.Slider] = None

|

||||

FACE_DETECTOR_ANGLES_CHECKBOX_GROUP : Optional[gradio.CheckboxGroup] = None

|

||||

FACE_DETECTOR_SCORE_SLIDER : Optional[gradio.Slider] = None

|

||||

|

||||

|

||||

def render() -> None:

|

||||

global FACE_DETECTOR_MODEL_DROPDOWN

|

||||

global FACE_DETECTOR_SIZE_DROPDOWN

|

||||

global FACE_DETECTOR_MARGIN_SLIDER

|

||||

global FACE_DETECTOR_ANGLES_CHECKBOX_GROUP

|

||||

global FACE_DETECTOR_SCORE_SLIDER

|

||||

|

||||

face_detector_size_dropdown_options : ComponentOptions =\

|

||||

{

|

||||

'label': translator.get('uis.face_detector_size_dropdown'),

|

||||

'value': state_manager.get_item('face_detector_size')

|

||||

}

|

||||

if state_manager.get_item('face_detector_size') in facefusion.choices.face_detector_set[state_manager.get_item('face_detector_model')]:

|

||||

face_detector_size_dropdown_options['choices'] = facefusion.choices.face_detector_set[state_manager.get_item('face_detector_model')]

|

||||

with gradio.Row():

|

||||

FACE_DETECTOR_MODEL_DROPDOWN = gradio.Dropdown(

|

||||

label = translator.get('uis.face_detector_model_dropdown'),

|

||||

choices = facefusion.choices.face_detector_models,

|

||||

value = state_manager.get_item('face_detector_model')

|

||||

)

|

||||

FACE_DETECTOR_SIZE_DROPDOWN = gradio.Dropdown(**face_detector_size_dropdown_options)

|

||||

FACE_DETECTOR_MARGIN_SLIDER = gradio.Slider(

|

||||

label = translator.get('uis.face_detector_margin_slider'),

|

||||

value = state_manager.get_item('face_detector_margin')[0],

|

||||

step = calculate_float_step(facefusion.choices.face_detector_margin_range),

|

||||

minimum = facefusion.choices.face_detector_margin_range[0],

|

||||

maximum = facefusion.choices.face_detector_margin_range[-1]

|

||||

)

|

||||